A Founder's Guide to Website Conversion Optimization

Getting traffic is easy. Turning it into revenue? That's the hard part. If your conversion rate feels painful, you're not alone. This is where website conversion optimization comes in. It’s the craft of turning more visitors into customers, whether that means making a purchase, signing up, or booking a demo. Waiting weeks for a data analyst to build a dashboard is a relic of the past. Let's fix your leaky revenue bucket, starting now.

TL;DR: Your Quick Guide to Better Conversions

Stop Guessing: Your website is leaking money. Use data to find the biggest cracks in your user experience first.

Ask, Don't Analyze: Ditch the slow, complex BI tools. A conversational AI data analyst like Statspresso lets you ask questions and get charts in seconds.

Prioritize Ruthlessly: You can't test everything. Use a framework like PIE or ICE to focus on experiments with the highest potential impact.

Segment Your Results: A test "winner" for one audience can be a loser for another. Dig into mobile vs. desktop, or new vs. returning users to find the real story.

Start Now: Connect your data to Statspresso. Skip the SQL. Just ask your data a question and get a chart in seconds.

Your Website Is Leaking Money. Let's Fix It.

Think of your unoptimized website as a leaky bucket. You pour traffic in the top, but potential revenue is constantly dripping out through cracks in the user experience. This guide isn't about throwing spaghetti at the wall or copying competitors. We're going through a disciplined, data-first approach to boosting your conversion rate.

The old way meant waiting weeks for an over-burdened analyst to build a dashboard. That's a relic. To stop the bleeding and seriously improve your ecommerce conversion rate, you need to get smarter about turning your existing traffic into money.

Ditch the Dashboards and Start Asking Questions

Data should be your closest ally, but most teams are drowning in it. You've got sales numbers in Shopify, customer profiles in HubSpot, and product analytics locked away in a Postgres database. The problem? Getting simple answers feels impossible without writing SQL or begging your data team for a report.

This is where a conversational AI data analyst changes the game. Instead of filing a ticket and waiting, you can just ask a question and get an answer—with a chart—in seconds.

Key Takeaway: The point of conversion optimization isn't chasing small wins. It's building a system that constantly finds and plugs the biggest leaks in your revenue funnel. That system needs instant data access.

This modern approach means you skip the technical bottlenecks and get straight to insights. Imagine connecting your data and immediately having clarity to:

Pinpoint Friction: Find the exact step in your checkout where most users are dropping off.

Understand Behavior: See which marketing channels bring in your highest-value customers.

Validate Ideas: Get quick data to back up a hypothesis before building an A/B test.

With a tool like Statspresso, your conversational AI data analyst, you just ask questions in plain English. Instead of piecing together a complex report, just ask for what you need.

Try asking Statspresso: "Show me the conversion rate from 'add to cart' to 'purchase' over the last 60 days, broken down by device type."

This immediate feedback loop lets you move faster, make smarter decisions, and finally start plugging the leaks in your revenue bucket.

Setting Goals That Actually Matter for CRO

Before you run a single A/B test, let's talk goals. Countless CRO programs spin their wheels because they start with a vague wish instead of a concrete strategy. Saying you want "more conversions" isn't a strategy—it's a hope.

Real conversion optimization drives tangible business outcomes, not just a higher percentage on a dashboard. You have to move past vanity metrics and zero in on Key Performance Indicators (KPIs) that signal a healthier business. The goal isn't just to bump a number; it's to improve metrics with dollar signs attached.

Macro vs. Micro Conversions: The Big Wins and the Small Nudges

Not all user actions are equal. Understanding the difference is key.

Macro-conversions: These are your primary objectives, the actions that directly generate revenue. Think of a completed purchase, a signed enterprise contract, or a paid subscription. These are the home runs.

Micro-conversions: These are the smaller steps a user takes that show they're moving in the right direction. This could be signing up for a newsletter, downloading a case study, watching a product demo, or creating an account. They're leading indicators of future revenue.

It's a mistake to focus only on macro-conversions. A user who downloads a whitepaper (micro) might be the same person who signs a major contract three weeks later (macro). Tracking both gives you a richer understanding of the user journey.

Beyond Clicks to KPIs That Count

Your goals have to be tied to KPIs that reflect real customer value. A higher conversion rate on a low-margin product is fool's gold compared to a slightly lower rate that brings in high-value customers.

Shift your focus to goals connected to metrics like these:

Average Order Value (AOV): Are your experiments encouraging people to add more to their cart?

Customer Lifetime Value (CLV): Are you optimizing for one-time buyers or for loyal customers who come back?

Lead Quality Score: If you're in B2B, are your forms generating noise, or delivering leads your sales team is excited to call?

To establish a baseline, you first need to know where you stand. But pulling these numbers shouldn't require a data science degree. This is precisely where you skip the SQL and just ask your data a question.

This is where a tool like Statspresso, a conversational AI data analyst, comes in. It lets you get these baseline metrics without writing a single line of code. You connect your database, and you get immediate, chart-based answers.

Try asking Statspresso: "What was our average conversion rate for new users from the US last quarter?"

This instant access turns goal-setting from a guessing game into a data-informed process, ensuring every experiment has a clear purpose and a measurable impact.

Finding Where Your Conversion Funnel Leaks

Every conversion funnel has weak spots. Think of it less like a smooth slide and more like a leaky pipe. Your job is to find the biggest cracks first. This is where we stop guessing and start digging into the data to see exactly where users drop off.

You don't need to wrestle with CSV exports or clunky BI tools. The answers are in your data, whether it’s in Shopify, HubSpot, or a Postgres database. You just need to ask the right questions. A high bounce rate on a critical page is often the most obvious culprit—a dead end for potential revenue.

From Page Views to Page Problems

Before you plug leaks, you need to understand the path users are trying to take. Mapping their journey with a clear user flow diagram is a great start. With that map, your analytics will light up the problem spots—pages where visitors arrive, take one look, and hit the back button.

But this goes beyond bounce rate. You're looking for significant drop-offs between key steps:

Landing Page to Product Page: Are people clicking an ad and leaving immediately? Your ad message probably doesn't match the page.

Product Page to Add to Cart: If they view the product but never add it to their cart, your pricing, description, or images might be weak.

Cart to Checkout: This is a classic. A huge drop-off here almost always points to sticker shock from shipping costs or a clunky, untrustworthy cart page.

These aren't mysteries. They're specific, data-backed questions you can answer now.
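To make the step-by-step drop-off idea concrete, here is a minimal Python sketch. The step names and visitor counts are hypothetical, and the helper functions (`funnel_dropoff`, `biggest_leak`) are illustrative names, not part of any tool mentioned in this guide. It assumes you already have a count of users who reached each step, in order.

```python
from typing import Dict, List, Tuple


def funnel_dropoff(steps: List[Tuple[str, int]]) -> List[Dict]:
    """Compute conversion and drop-off rates between consecutive funnel steps."""
    rows = []
    for (prev_name, prev_count), (name, count) in zip(steps, steps[1:]):
        # Share of users at the previous step who reached this step.
        rate = count / prev_count if prev_count else 0.0
        rows.append({
            "transition": f"{prev_name} -> {name}",
            "conversion_rate": rate,
            "drop_off_rate": 1.0 - rate,
        })
    return rows


def biggest_leak(steps: List[Tuple[str, int]]) -> Dict:
    """Return the transition that loses the largest share of users."""
    return max(funnel_dropoff(steps), key=lambda row: row["drop_off_rate"])


# Hypothetical counts of users reaching each step.
funnel = [
    ("landing_page", 10000),
    ("product_page", 4000),
    ("add_to_cart", 1200),
    ("checkout", 300),
    ("purchase", 210),
]

for row in funnel_dropoff(funnel):
    print(f"{row['transition']:<30} drop-off {row['drop_off_rate']:.0%}")

print("Biggest leak:", biggest_leak(funnel)["transition"])
```

With these made-up numbers, the cart-to-checkout transition loses the largest share of users, so that is where you would look first. Swap in your own counts to see where your funnel actually leaks.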

Diagnosing the "Why" Behind the Drop-Off

Spotting a high bounce rate tells you what is happening. The real work is diagnosing the why. Is it a technical glitch? A design flaw? A messaging problem?

A high bounce rate on a key page kills conversions before they can start. Typical bounce rates are often cited as ranging from 26% to 70%, and the higher yours sits in that range, the more revenue you are leaving on the table. Keeping visitors engaged isn't a nice-to-have; it's a lifeline for your revenue.

What causes people to leave? It usually comes down to a few culprits:

Slow Page Load Speed: Anything over three seconds and you’re losing customers. Simple as that.

Confusing Value Proposition: If they can't figure out what you do in five seconds, they're gone.

Poor Mobile Experience: Your site must be flawless on a phone. If not, you're ignoring a massive part of your audience.

Trust Issues: Is your site visibly secure? Do you have trust badges, social proof, or customer reviews? Small doubts cause big drop-offs.

Instead of getting lost in reports, get straight to the point. A conversational AI data analyst lets you skip the SQL and just ask.

Try asking Statspresso: "Show me the top 5 landing pages with the highest bounce rates for mobile traffic last month."

Within seconds, you have an actionable list. The AI might even surface an insight you hadn't considered, like a specific UTM campaign driving all that high-bounce traffic. Now you know exactly where your marketing spend is being wasted. This is how you move from being buried in data to making decisive, profitable actions.

Prioritizing Experiments for Maximum Impact

You’ve pinpointed the leaks and your team has a whiteboard full of ideas. Now what? You can’t test everything. Smart website conversion optimization isn't about running more tests; it's about running the right tests by placing smart, data-informed bets.

This is where most teams get stuck. A/B test the hero copy or the checkout flow? The button color or the pricing page? The answer isn't a gut feeling. It’s a calculated decision, and you need a framework.

From Guesswork to Smart Bets with PIE and ICE

Two of the most popular frameworks I’ve used are PIE and ICE. They’re simple, but they force you to get honest about your ideas.

PIE Framework: Scores each test idea on three criteria: Potential (how much improvement can we expect?), Importance (how valuable is the traffic on this page?), and Ease (how many resources will it take?).

ICE Framework: A close cousin, this one scores ideas on Impact (similar to Potential), Confidence (how sure are we this will work?), and Ease.
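If you want to see how a PIE score plays out, here is a rough Python sketch. It assumes each criterion is rated from 1 to 10 and that the score is the simple average of the three, which is one common convention rather than the only one. The ideas and ratings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    potential: int   # 1-10: how much improvement can we expect?
    importance: int  # 1-10: how valuable is the traffic on this page?
    ease: int        # 1-10: how cheap is it to build and run?

    @property
    def pie_score(self) -> float:
        # Assumption: PIE score is the plain average of the three ratings.
        return (self.potential + self.importance + self.ease) / 3


# Hypothetical backlog with made-up ratings.
ideas = [
    Idea("Rewrite hero copy", potential=5, importance=8, ease=9),
    Idea("Simplify checkout flow", potential=9, importance=9, ease=5),
    Idea("Change button color", potential=2, importance=6, ease=10),
]

# Highest score first.
ranked = sorted(ideas, key=lambda idea: idea.pie_score, reverse=True)

for idea in ranked:
    print(f"{idea.pie_score:.2f}  {idea.name}")
```

An ICE version would look the same with the fields renamed to impact, confidence, and ease. Either way, the scores are only as honest as the ratings you feed in.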

Think of these as your starting line. The magic happens when you bring qualitative insights into the mix. Your analytics tell you what is happening, but only your users can tell you why.

I’ve seen teams spend months debating a homepage redesign, only to discover from customer interviews that the real conversion killer was a confusing discount code field. Qualitative feedback is your fastest path to a winning hypothesis.

Marrying Quantitative Data with Qualitative Insights

Let's say your analytics show a 40% drop-off at the payment step. That’s the "what." Now, dig for the "why."

On-Page Surveys: Use a tool like Hotjar to ask users on the page a simple question: "Is there anything preventing you from making a purchase today?"

User Session Recordings: Watch recordings of real user sessions on pages with high drop-off rates. You'll be amazed at what you see.

Customer Interviews: Talk to recent customers. A golden question: "Was there any point where you almost didn't complete your purchase? What was that?"

This approach turns "I think we should change the button color" into a powerful, data-backed hypothesis: "Our data shows a massive drop-off on mobile at the payment step, and user feedback points to security confusion. Let's test adding trust badges and a mobile-optimized payment button."

See the difference? Now you have a hypothesis grounded in both quantitative and qualitative evidence.

Of course, digging up data can be a bottleneck. This is where a conversational AI data analyst like Statspresso changes the game. Instead of waiting days, validate your hunches in seconds.

Try asking Statspresso: "Compare conversion rates for users who interact with the promo code field versus those who don't."

You get an immediate signal. This speed lets you prioritize with far more confidence.

Experiment Prioritization: The Old Way vs. The Statspresso Way

| Phase | The Old Way (Manual SQL) | The New Way (Statspresso) |
|---|---|---|
| Idea Generation | Brainstorming in a spreadsheet, based on opinion. | Ideas sparked by AI-surfaced insights from your actual data. |
| Data Validation | Submit a ticket to the data team; wait days (or weeks). | Ask a plain-English question and get a funnel analysis chart in seconds. |
| Prioritization | Score ideas in a PIE/ICE framework using outdated data and guesswork. | Score ideas using live, accurate data retrieved instantly. |
| Final Decision | Debate priorities in a long meeting. | Make a quick, data-backed decision and design the experiment. |

When you automate the data-gathering, your team is free to focus on what humans do best: understanding the user and designing creative experiments. This is how you start making changes that deliver a real return.

Designing and Analyzing Tests Like a Pro

Anyone can launch an A/B test. The challenge is running a good one. It's easy to declare a winner based on statistical noise or roll out a change that torpedoes another key metric.

This is where you learn to test with confidence. Getting the fundamentals right is everything. You need a rock-solid hypothesis, a grasp of statistical significance, and the discipline to let a test run without peeking. But first, pick the right tool for the job.

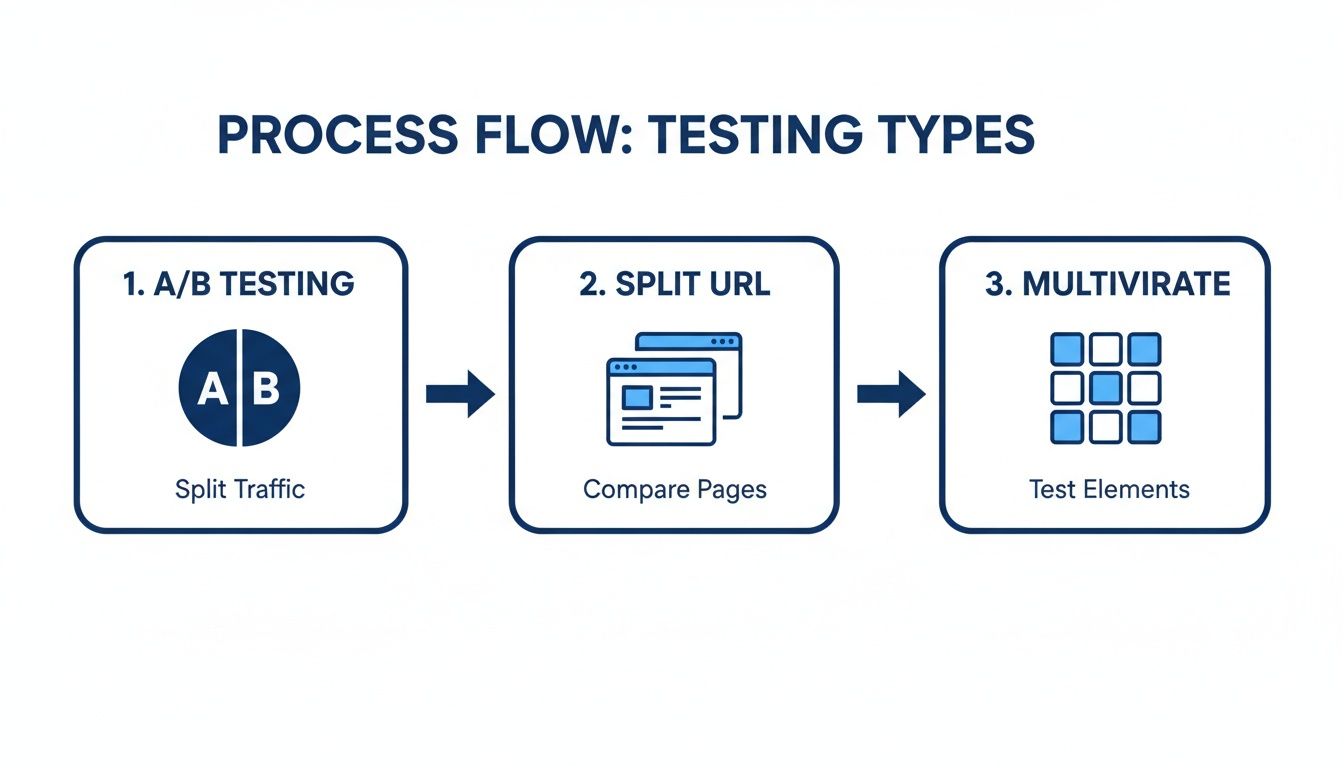

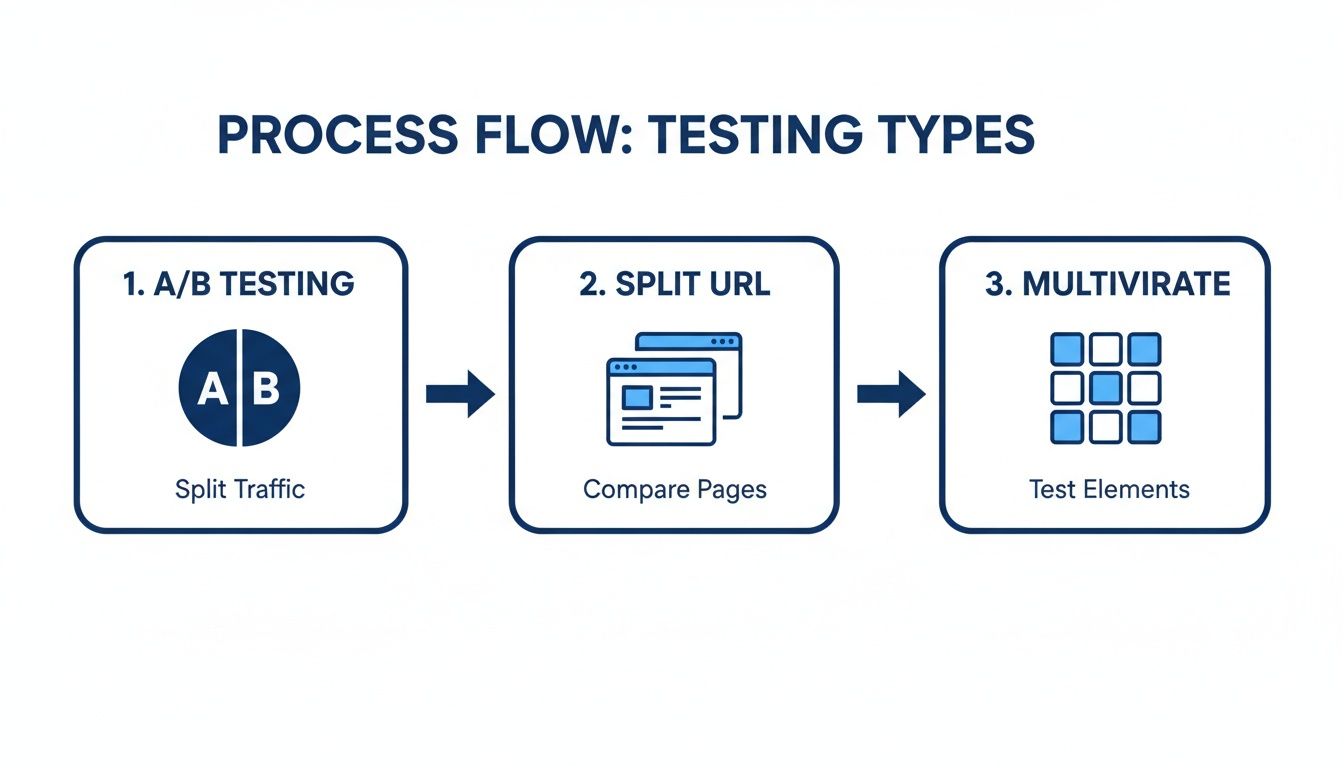

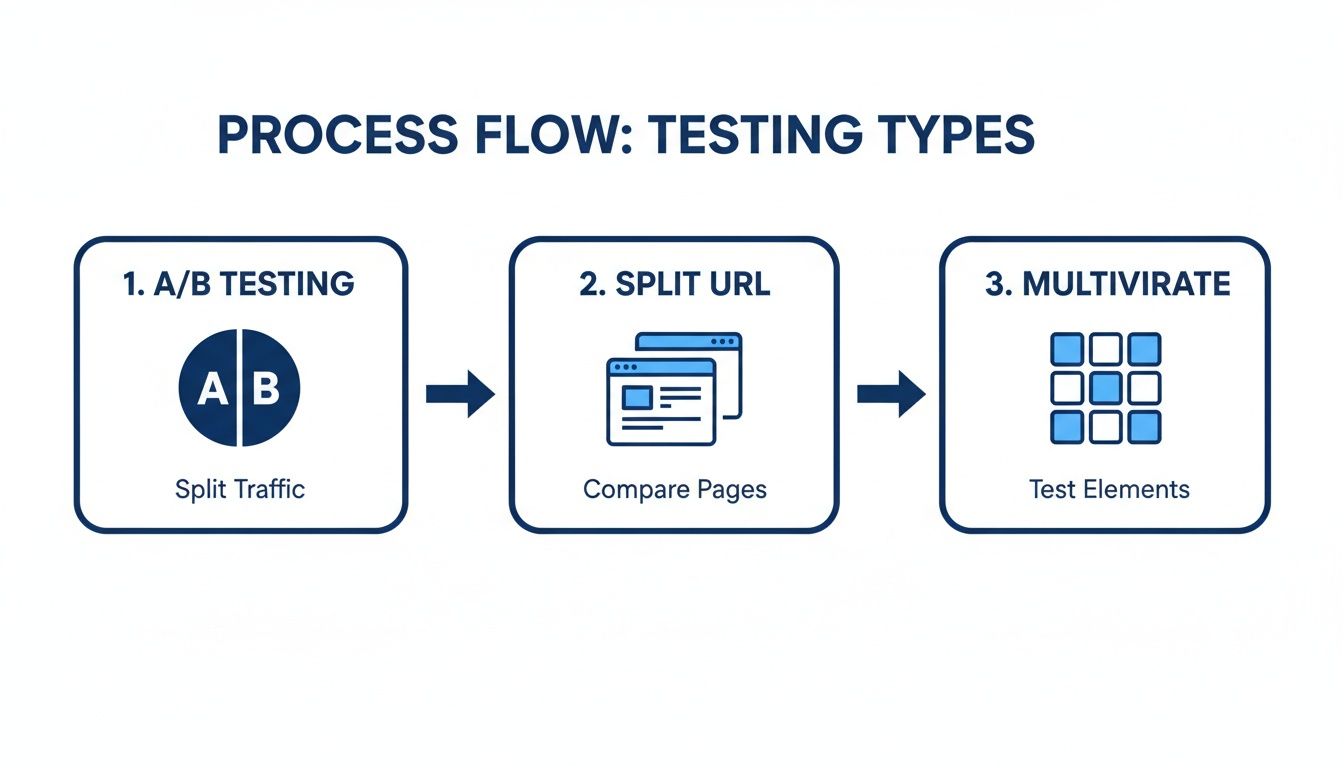

A/B, Split URL, or Multivariate Testing

These terms aren't interchangeable. Each is a different approach to website conversion optimization, and knowing which to use is half the battle.

A/B Testing: Your everyday workhorse. Pit one new version ("variant") against your current page ("control"). It's simple, direct, and best for testing a focused change.

Split URL Testing: Think of this as A/B testing for major surgery. You're testing two completely different pages, each on its own URL. Go-to for big page redesigns.

Multivariate Testing (MVT): The advanced stuff. Use this to test multiple changes at once to find the winning combination. MVT requires a massive amount of traffic to work.

My advice? Most teams should stick with A/B testing. It's the fastest path to clear, reliable results. Save MVT for when your testing program is more mature.

Segment Your Results to Find the Real Story

Here’s a tip that separates amateurs from experts: a winning test for one audience can be a flop for another. A change that resonates with mobile users might fall flat with your desktop crowd. Segmenting your results is essential.

Data backs this up. The average website conversion rate is around 2.9%, but that average hides real differences between channels. A 2026 industry report shows direct traffic, meaning users who type your URL in directly, converting best at 3.3%, ahead of organic search (2.7%), email (2.5%), and social media (1.5%).

Herein lies the opportunity. You might run a test that shows a "flat" result overall. But when you dig in, you could discover it was a huge success with organic search visitors but a failure with direct visitors. Without segmenting, you'd throw out a valuable insight.
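Here is a small Python sketch of how a "flat" result can hide two opposite stories. All numbers are hypothetical and chosen so the effect is easy to see, and the function names are illustrative.

```python
from typing import Dict, Tuple

# segment -> arm -> (visitors, conversions); all numbers are made up.
Results = Dict[str, Dict[str, Tuple[int, int]]]

results: Results = {
    "organic_search": {"control": (5000, 125), "variant": (5000, 175)},
    "direct":         {"control": (5000, 175), "variant": (5000, 125)},
}


def conversion_rate(visitors: int, conversions: int) -> float:
    return conversions / visitors if visitors else 0.0


def lift_by_segment(data: Results) -> Dict[str, float]:
    """Relative lift of variant over control, computed per segment."""
    lifts = {}
    for segment, arms in data.items():
        control = conversion_rate(*arms["control"])
        variant = conversion_rate(*arms["variant"])
        lifts[segment] = (variant - control) / control
    return lifts


def overall_lift(data: Results) -> float:
    """Relative lift of variant over control with all segments pooled."""
    totals = {"control": [0, 0], "variant": [0, 0]}
    for arms in data.values():
        for arm, (visitors, conversions) in arms.items():
            totals[arm][0] += visitors
            totals[arm][1] += conversions
    control = conversion_rate(*totals["control"])
    variant = conversion_rate(*totals["variant"])
    return (variant - control) / control


print(f"Overall lift: {overall_lift(results):+.1%}")
for segment, lift in lift_by_segment(results).items():
    print(f"{segment:<15} lift: {lift:+.1%}")
```

In this made-up data the pooled result shows no lift at all, while organic search visitors convert noticeably better on the variant and direct visitors convert worse. One caution: each segment has less traffic than the whole test, so a segment-level difference needs its own significance check before you act on it.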

The old way of finding these nuggets meant hours in Google Analytics or waiting for an analyst. Now, you can just ask. A conversational AI data analyst like Statspresso lets you skip the tedious work.

Connect data sources like your Postgres database and analytics tools, then simply ask a question. Get a chart in seconds.

Try asking Statspresso: "Compare conversion rates for direct vs. organic search traffic over the last 90 days."

Getting this data instantly allows you to segment every test result. You're no longer just finding a winner; you're understanding why it won and for whom.

Understanding Statistical Significance

Finally, the math. Statistical significance measures how confident you can be that your result isn't a random fluke. You'll see this as a "p-value" or a "confidence level."

Most CRO pros aim for a 95% confidence level. That means you accept a 5% risk of a false positive: if there were truly no difference between the versions, a result this large would show up by chance only about 5% of the time.
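For the curious, here is one way a p-value for an A/B test can be computed: a pooled two-proportion z-test, sketched in Python with only the standard library. This is a common textbook approach, not necessarily what your testing tool uses under the hood, and the visitor and conversion counts are hypothetical.

```python
from math import erfc, sqrt
from typing import Tuple


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> Tuple[float, float]:
    """Pooled two-proportion z-test. Returns (z, two-sided p-value)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both versions convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value


# Hypothetical test: control converts 200 of 10,000, variant 260 of 10,000.
z, p_value = two_proportion_z_test(200, 10000, 260, 10000)
print(f"z = {z:.2f}, p-value = {p_value:.4f}")
print("Significant at 95%" if p_value < 0.05 else "Not significant at 95%")
```

A p-value below 0.05 corresponds to clearing the 95% confidence bar. It says nothing about how big the lift is or whether it matters commercially, so read it alongside the actual difference in rates.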

Don't stop a test just because one version is "winning" after a day. Let it run until you hit your pre-calculated sample size. Peeking early is the cardinal sin of A/B testing—the fastest way to fool yourself. For a deeper dive, our guide on how to interpret t-test results is an excellent start.
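A pre-calculated sample size can be estimated before the test starts. The Python sketch below uses a standard normal-approximation formula for comparing two proportions. The 2.9% baseline, 20% relative lift, 95% confidence, and 80% power are illustrative assumptions; plug in your own.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed in EACH variant for a two-sided test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)  # rate you hope the variant hits
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha=0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for power=0.8
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Hypothetical inputs: 2.9% baseline, hoping to detect a 20% relative lift.
n = sample_size_per_variant(baseline_rate=0.029, relative_lift=0.20)
print(f"Visitors needed per variant: {n:,}")
```

Notice how quickly the requirement grows as the lift you want to detect shrinks. That is why small tweaks on low-traffic pages rarely reach a trustworthy result.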

From Test to Rollout: The Final Mile

You ran a disciplined test, hit significance, and crowned a winner. Pop the champagne? Not so fast.

This is where many solid CRO programs stumble. Shipping the winning variation to 100% of users and immediately jumping to the next test is a classic mistake. The final stretch is about making sure your gains translate into lasting business impact.

Watch post-launch metrics like a hawk. Does the lift hold up? And just as critically, did you inadvertently break something else? A new headline that boosts sign-ups but kills long-term retention isn't a win. It's just moving your leaky bucket.

From Static Reports to Live Monitoring

Relying on a weekly report to check a new feature is like driving by looking only in the rearview mirror. You’re reacting to what’s already happened. You need a live, dynamic view of performance.

The ultimate goal is a powerful flywheel: test, learn, implement, repeat. Shortening this cycle is what separates teams that run occasional tests from true learning organizations.

Tools like a conversational AI data analyst give you this capability without tying up engineers. With Statspresso, you can spin up a live dashboard in minutes to monitor your new feature's vitals. This lets you spot unintended consequences before they snowball.

Let’s say you just pushed a new checkout flow live. Instead of waiting, simply ask:

Try asking Statspresso: "Show me the checkout completion rate and average order value for the last 7 days compared to the previous 7 days."

This immediate feedback loop is crucial. It’s the difference between quickly patching a small leak and dealing with a major flood.
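The same before-and-after comparison can be sketched in a few lines of Python. The `PeriodStats` class and all figures below are hypothetical; the point is to check a guardrail metric (average order value) alongside the metric you set out to improve (checkout completion).

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    checkouts_started: int
    orders: int
    revenue: float

    @property
    def completion_rate(self) -> float:
        return self.orders / self.checkouts_started

    @property
    def average_order_value(self) -> float:
        return self.revenue / self.orders


def relative_change(before: float, after: float) -> float:
    return (after - before) / before


# Hypothetical 7-day windows before and after the new checkout flow shipped.
previous = PeriodStats(checkouts_started=2000, orders=1200, revenue=60000.0)
current = PeriodStats(checkouts_started=2000, orders=1320, revenue=59400.0)

completion_change = relative_change(previous.completion_rate,
                                    current.completion_rate)
aov_change = relative_change(previous.average_order_value,
                             current.average_order_value)
revenue_change = relative_change(previous.revenue, current.revenue)

print(f"Checkout completion: {completion_change:+.1%}")
print(f"Average order value: {aov_change:+.1%}")
print(f"Revenue:             {revenue_change:+.1%}")
```

In this made-up example, completion improves while average order value falls by a similar amount, so revenue barely moves. That is exactly the kind of side effect a single headline metric would hide.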

While standard A/B testing is often the go-to, advanced methods like split URL or multivariate tests are invaluable for tackling complex optimization challenges before you even get to rollout.

Personalization: The Next Frontier

Once you've confirmed a change is a net positive, the game gets more interesting. The next move is personalization.

Your experiment results almost always contain hidden gems. You might find your new design was a massive success with mobile users from organic search but performed worse for desktop users from paid campaigns. This is gold.

Instead of a one-size-fits-all rollout, deliver truly relevant experiences. Ship the winning variation only to the audience segments it won with. This is how you evolve from basic A/B tests to a sophisticated optimization strategy.

By using Statspresso to skip the SQL and just ask your data questions, you can uncover these high-value segments in seconds. This speed turns an insight into an actionable personalization rule before the data gets cold, giving you a serious competitive edge in what we call GenBI, or Generative Business Intelligence.

Common CRO Questions I Hear All the Time

Even with a full playbook, a few questions always pop up. Let's tackle the most common ones I hear.

What Is a Good Conversion Rate?

This is the most-asked question, and the only honest answer is: it depends. You'll see articles throwing around an average website conversion rate of 2.9%, but that figure is useless without context.

An e-commerce store selling $20 t-shirts is playing a different game than a B2B SaaS company closing a $50,000 contract. Their rates will be, and should be, worlds apart. Forget benchmarks. The only number that matters is your own. Your goal is to consistently beat what you did last month.

How Long Should I Run an A/B Test?

Let the sample size decide, not the calendar and not whichever version happens to be ahead. Run your test until it reaches the sample size you calculated up front, then check for statistical significance. As a rule of thumb, that window should also cover at least one or two full business cycles (e.g., two weeks) to smooth out daily traffic patterns.

The biggest mistake is stopping a test early just because one version is ahead. This is called "peeking," and it's a surefire way to get a false positive. Let the numbers mature.

How Much Traffic Do I Need for CRO?

While there isn't a magic number, more traffic helps you get to a significant result faster. If your site has low traffic—fewer than 5,000 unique visitors per month—traditional A/B testing can be painfully slow.

When traffic is low, swing for the fences. Test big, bold changes more likely to create a major impact:

Test a completely new value proposition.

Try a radically simplified landing page.

Lean heavily on qualitative feedback from user surveys and interviews to guide your big bets.

Can CRO Hurt My SEO?

When done right, absolutely not. Search engines like Google understand A/B testing.

Just follow best practices. Use the rel="canonical" tag to tell Google which page is the original and avoid "cloaking" (showing one version to users and a different one to search bots). In the long run, a better user experience often leads to higher engagement, which can give your SEO rankings an indirect boost.

Ultimately, the key to great website conversion optimization is getting fast, reliable answers from your data. Waiting for analysts is a massive bottleneck. A conversational AI data analyst like Statspresso lets you skip the SQL and get straight to the insights you need.

Ready to stop guessing? Connect your first data source to Statspresso for free and ask your first question. Get clear charts, not confusion, in just seconds.

Getting traffic is easy. Turning it into revenue? That's the hard part. If your conversion rate feels painful, you're not alone. This is where website conversion optimization comes in. It’s the craft of turning more visitors into customers, whether that means making a purchase, signing up, or booking a demo. Waiting weeks for a data analyst to build a dashboard is a relic of the past. Let's fix your leaky revenue bucket, starting now.

TL;DR: Your Quick Guide to Better Conversions

Stop Guessing: Your website is leaking money. Use data to find the biggest cracks in your user experience first.

Ask, Don't Analyze: Ditch the slow, complex BI tools. A conversational AI data analyst like Statspresso lets you ask questions and get charts in seconds.

Prioritize Ruthlessly: You can't test everything. Use a framework like PIE or ICE to focus on experiments with the highest potential impact.

Segment Your Results: A test "winner" for one audience can be a loser for another. Dig into mobile vs. desktop, or new vs. returning users to find the real story.

Start Now: Connect your data to Statspresso. Skip the SQL. Just ask your data a question and get a chart in seconds.

Your Website Is Leaking Money. Let's Fix It.

Think of your unoptimized website as a leaky bucket. You pour traffic in the top, but potential revenue is constantly dripping out through cracks in the user experience. This guide isn't about throwing spaghetti at the wall or copying competitors. We're going through a disciplined, data-first approach to boosting your conversion rate.

The old way meant waiting weeks for an over-burdened analyst to build a dashboard. That's a relic. To stop the bleeding and seriously improve ecommerce conversion rate, you need to get smarter about turning your existing traffic into money.

Ditch the Dashboards and Start Asking Questions

Data should be your closest ally, but most teams are drowning in it. You've got sales numbers in Shopify, customer profiles in HubSpot, and product analytics locked away in a Postgres database. The problem? Getting simple answers feels impossible without writing SQL or begging your data team for a report.

This is where a conversational AI data analyst changes the game. Instead of filing a ticket and waiting, you can just ask a question and get an answer—with a chart—in seconds.

Key Takeaway: The point of conversion optimization isn't chasing small wins. It's building a system that constantly finds and plugs the biggest leaks in your revenue funnel. That system needs instant data access.

This modern approach means you skip the technical bottlenecks and get straight to insights. Imagine connecting your data and immediately having clarity to:

Pinpoint Friction: Find the exact step in your checkout where most users are dropping off.

Understand Behavior: See which marketing channels bring in your highest-value customers.

Validate Ideas: Get quick data to back up a hypothesis before building an A/B test.

With a tool like Statspresso, your conversational AI data analyst, you just ask questions in plain English. Instead of piecing together a complex report, just ask for what you need.

Try asking Statspresso: "Show me the conversion rate from 'add to cart' to 'purchase' over the last 60 days, broken down by device type."

This immediate feedback loop lets you move faster, make smarter decisions, and finally start plugging the leaks in your revenue bucket.

Setting Goals That Actually Matter for CRO

Before you run a single A/B test, let's talk goals. Countless CRO programs spin their wheels because they start with a vague wish instead of a concrete strategy. Saying you want "more conversions" isn't a strategy—it's a hope.

Real conversion optimization drives tangible business outcomes, not just a higher percentage on a dashboard. You have to move past vanity metrics and zero in on Key Performance Indicators (KPIs) that signal a healthier business. The goal isn't just to bump a number; it's to improve metrics with dollar signs attached.

Macro vs. Micro Conversions: The Big Wins and the Small Nudges

Not all user actions are equal. Understanding the difference is key.

Macro-conversions: These are your primary objectives, the actions that directly generate revenue. Think of a completed purchase, a signed enterprise contract, or a paid subscription. These are the home runs.

Micro-conversions: These are the smaller steps a user takes that show they're moving in the right direction. This could be signing up for a newsletter, downloading a case study, watching a product demo, or creating an account. They're leading indicators of future revenue.

It's a mistake to focus only on macro-conversions. A user who downloads a whitepaper (micro) might be the same person who signs a major contract three weeks later (macro). Tracking both gives you a richer understanding of the user journey.

Beyond Clicks to KPIs That Count

Your goals have to be tied to KPIs that reflect real customer value. A higher conversion rate on a low-margin product is fool's gold compared to a slightly lower rate that brings in high-value customers.

Shift your focus to goals connected to metrics like these:

Average Order Value (AOV): Are your experiments encouraging people to add more to their cart?

Customer Lifetime Value (CLV): Are you optimizing for one-time buyers or for loyal customers who come back?

Lead Quality Score: If you're in B2B, are your forms generating noise, or delivering leads your sales team is excited to call?

To establish a baseline, you first need to know where you stand. But pulling these numbers shouldn't require a data science degree. This is precisely where you skip the SQL and just ask your data a question.

This is where a tool like Statspresso, a conversational AI data analyst, comes in. It lets you get these baseline metrics without writing a single line of code. You connect your database, and you get immediate, chart-based answers.

Try asking Statspresso: "What was our average conversion rate for new users from the US last quarter?"

This instant access turns goal-setting from a guessing game into a data-informed process, ensuring every experiment has a clear purpose and a measurable impact.

Finding Where Your Conversion Funnel Leaks

Every conversion funnel has weak spots. Think of it less like a smooth slide and more like a leaky pipe. Your job is to find the biggest cracks first. This is where we stop guessing and start digging into the data to see exactly where users drop off.

You don't need to wrestle with CSV exports or clunky BI tools. The answers are in your data, whether it’s in Shopify, HubSpot, or a Postgres database. You just need to ask the right questions. A high bounce rate on a critical page is often the most obvious culprit—a dead end for potential revenue.

From Page Views to Page Problems

Before you plug leaks, you need to understand the path users are trying to take. Mapping their journey with a clear user flow diagram is a great start. With that map, your analytics will light up the problem spots—pages where visitors arrive, take one look, and hit the back button.

But this goes beyond bounce rate. You're looking for significant drop-offs between key steps:

Landing Page to Product Page: Are people clicking an ad and leaving immediately? Your ad message probably doesn't match the page.

Product Page to Add to Cart: If they view the product but never add it to their cart, your pricing, description, or images might be weak.

Cart to Checkout: This is a classic. A huge drop-off here almost always points to sticker shock from shipping costs or a clunky, untrustworthy cart page.

These aren't mysteries. They're specific, data-backed questions you can answer now.

Diagnosing the "Why" Behind the Drop-Off

Spotting a high bounce rate tells you what is happening. The real work is diagnosing the why. Is it a technical glitch? A design flaw? A messaging problem?

Bounce rates between 26% and 70% directly kill conversions. Keeping visitors engaged isn't a nice-to-have; it's a lifeline for your revenue. This is a core truth in website conversion optimization and a focus of my automated BI work.

What causes people to leave? It usually comes down to a few culprits:

Slow Page Load Speed: Anything over three seconds and you’re losing customers. Simple as that.

Confusing Value Proposition: If they can't figure out what you do in five seconds, they're gone.

Poor Mobile Experience: Your site must be flawless on a phone. If not, you're ignoring a massive part of your audience.

Trust Issues: Is your site visibly secure? Do you have trust badges, social proof, or customer reviews? Small doubts cause big drop-offs.

Instead of getting lost in reports, get straight to the point. A conversational AI data analyst lets you skip the SQL and just ask.

Try asking Statspresso: "Show me the top 5 landing pages with the highest bounce rates for mobile traffic last month."

Within seconds, you have an actionable list. The AI might even surface an insight you hadn't considered, like a specific UTM campaign driving all that high-bounce traffic. Now you know exactly where your marketing spend is being wasted. This is how you move from being buried in data to making decisive, profitable actions.

4. Prioritizing Experiments for Maximum Impact

You’ve pinpointed the leaks and your team has a whiteboard full of ideas. Now what? You can’t test everything. Smart website conversion optimization isn't about running more tests; it's about running the right tests by placing smart, data-informed bets.

This is where most teams get stuck. A/B test the hero copy or the checkout flow? The button color or the pricing page? The answer isn't a gut feeling. It’s a calculated decision, and you need a framework.

From Guesswork to Smart Bets with PIE and ICE

Two of the most popular frameworks I’ve used are PIE and ICE. They’re simple, but they force you to get honest about your ideas.

PIE Framework: Scores each test idea on three criteria: Potential (how much improvement can we expect?), Importance (how valuable is the traffic on this page?), and Ease (how many resources will it take?).

ICE Framework: A close cousin, this one scores ideas on Impact (similar to Potential), Confidence (how sure are we this will work?), and Ease.
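To make the scoring concrete, here's a small Python sketch of a PIE ranking. The backlog, the 1-10 scale, and the choice to average the three scores are all assumptions; some teams multiply instead, and for ICE you'd swap the keys for impact, confidence, and ease.

```python
from statistics import mean

# Hypothetical backlog; every score is a 1-10 judgment call, not measured data.
ideas = [
    {"name": "Simplify promo code field", "potential": 8, "importance": 9, "ease": 7},
    {"name": "Rewrite hero copy", "potential": 6, "importance": 7, "ease": 9},
    {"name": "Redesign pricing page", "potential": 9, "importance": 8, "ease": 3},
]

def pie_score(idea):
    """Average of Potential, Importance, and Ease (one common convention)."""
    return mean([idea["potential"], idea["importance"], idea["ease"]])

# Highest score first: this is the order you'd test in.
ranked = sorted(ideas, key=pie_score, reverse=True)
for idea in ranked:
    print(f"{pie_score(idea):.1f}  {idea['name']}")
```

The exact numbers matter less than the conversation they force. A big redesign with a high Potential score can still lose to a small fix once Ease is counted honestly.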

Think of these as your starting line. The magic happens when you bring qualitative insights into the mix. Your analytics tell you what is happening, but only your users can tell you why.

I’ve seen teams spend months debating a homepage redesign, only to discover from customer interviews that the real conversion killer was a confusing discount code field. Qualitative feedback is your fastest path to a winning hypothesis.

Marrying Quantitative Data with Qualitative Insights

Let's say your analytics show a 40% drop-off at the payment step. That’s the "what." Now, dig for the "why."

On-Page Surveys: Use a tool like Hotjar to ask users on the page a simple question: "Is there anything preventing you from making a purchase today?"

User Session Recordings: Watch recordings of real user sessions on pages with high drop-off rates. You'll be amazed at what you see.

Customer Interviews: Talk to recent customers. A golden question: "Was there any point where you almost didn't complete your purchase? What was that?"

This approach turns "I think we should change the button color" into a powerful, data-backed hypothesis: "Our data shows a massive drop-off on mobile at the payment step, and user feedback points to security confusion. Let's test adding trust badges and a mobile-optimized payment button."

See the difference? Now you have a hypothesis grounded in both quantitative and qualitative evidence.

Of course, digging up data can be a bottleneck. This is where a conversational AI data analyst like Statspresso changes the game. Instead of waiting days, validate your hunches in seconds.

Try asking Statspresso: "Compare conversion rates for users who interact with the promo code field versus those who don't."

You get an immediate signal. This speed lets you prioritize with far more confidence.

Experiment Prioritization: The Old Way vs. The Statspresso Way

| Phase | The Old Way (Manual SQL) | The New Way (Statspresso) |
|---|---|---|
| Idea Generation | Brainstorming in a spreadsheet, based on opinion. | Ideas sparked by AI-surfaced insights from your actual data. |
| Data Validation | Submit a ticket to the data team; wait days (or weeks). | Ask a plain-English question and get a funnel analysis chart in seconds. |
| Prioritization | Score ideas in a PIE/ICE framework using outdated data and guesswork. | Score ideas using live, accurate data retrieved instantly. |
| Final Decision | Debate priorities in a long meeting. | Make a quick, data-backed decision and design the experiment. |

When you automate the data-gathering, your team is free to focus on what humans do best: understanding the user and designing creative experiments. This is how you start making changes that deliver a real return.

Designing and Analyzing Tests Like a Pro

Anyone can launch an A/B test. The challenge is running a good one. It's easy to declare a winner based on statistical noise or roll out a change that torpedoes another key metric.

This is where you learn to test with confidence. Getting the fundamentals right is everything. You need a rock-solid hypothesis, a grasp of statistical significance, and the discipline to let a test run without peeking. But first, pick the right tool for the job.

A/B, Split URL, or Multivariate Testing

These terms aren't interchangeable. Each is a different approach to website conversion optimization, and knowing which to use is half the battle.

A/B Testing: Your everyday workhorse. Pit one new version ("variant") against your current page ("control"). It's simple, direct, and best for testing a focused change.

Split URL Testing: Think of this as A/B testing for major surgery. You're testing two completely different pages, each on its own URL. Go-to for big page redesigns.

Multivariate Testing (MVT): The advanced stuff. Use this to test multiple changes at once to find the winning combination. MVT requires a massive amount of traffic to work.

My advice? Most teams should stick with A/B testing. It's the fastest path to clear, reliable results. Save MVT for when your testing program is more mature.

Segment Your Results to Find the Real Story

Here’s a tip that separates amateurs from experts: a winning test for one audience can be a flop for another. A change that resonates with mobile users might fall flat with your desktop crowd. Segmenting your results is essential.

Data backs this up. The average website conversion rate is around 2.9%, but that average hides big differences by channel. A 2026 industry report puts direct traffic (users who type your URL directly) on top at 3.3%, ahead of organic search (2.7%), email (2.5%), and social media (1.5%). This is exactly the kind of breakdown conversational analytics makes easy to pull.

Herein lies the opportunity. You might run a test that shows a "flat" result overall. But when you dig in, you could discover it was a huge success with organic search visitors but a failure with direct visitors. Without segmenting, you'd throw out a valuable insight.
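Here's a minimal Python sketch of how a "flat" overall result can hide two opposite stories. The visitor and conversion counts are invented for illustration, and the segment names are assumptions.

```python
# Hypothetical test results per traffic source: (visitors, conversions).
results = {
    "organic": {"control": (4000, 100), "variant": (4000, 132)},
    "direct": {"control": (4000, 140), "variant": (4000, 108)},
}

def rate(visitors, conversions):
    """Conversion rate as a fraction."""
    return conversions / visitors

def relative_lift(control, variant):
    """Relative change in conversion rate, variant vs. control."""
    return rate(*variant) / rate(*control) - 1

def pooled(arm):
    """Sum visitors and conversions for one arm across all segments."""
    visitors = sum(seg[arm][0] for seg in results.values())
    conversions = sum(seg[arm][1] for seg in results.values())
    return visitors, conversions

overall = relative_lift(pooled("control"), pooled("variant"))
by_segment = {
    name: relative_lift(seg["control"], seg["variant"])
    for name, seg in results.items()
}

print(f"overall lift: {overall:+.1%}")
for name, lift in by_segment.items():
    print(f"{name} lift: {lift:+.1%}")
```

One caution: each segment has less traffic than the full test, and the more segments you slice, the more likely one of them looks like a winner by luck. Treat a segment-level result as a hypothesis for a follow-up test, not a verdict.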

The old way of finding these nuggets meant hours in Google Analytics or waiting for an analyst. Now, you can just ask. A conversational AI data analyst like Statspresso lets you skip the tedious work.

Connect data sources like your Postgres database and analytics tools, then simply ask a question. Get a chart in seconds.

Try asking Statspresso: "Compare conversion rates for direct vs. organic search traffic over the last 90 days."

Getting this data instantly allows you to segment every test result. You're no longer just finding a winner; you're understanding why it won and for whom.

Understanding Statistical Significance

Finally, the math. Statistical significance measures how confident you can be that your result isn't a random fluke. You'll see this as a "p-value" or a "confidence level."

Most CRO pros aim for a 95% confidence level. This means that if there were truly no difference between your versions, you'd see a gap this large only about 5% of the time.
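For a feel of what your testing tool is doing behind the scenes, here's a minimal sketch of a two-sided, two-proportion z-test using only Python's standard library. It's one common way to compare two conversion rates, not necessarily the method your tool uses, and the visitor and conversion counts are made up.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the assumption that both versions convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical test: control converts 290 of 10,000, variant 350 of 10,000.
z, p_value = two_proportion_z_test(conv_a=290, n_a=10_000, conv_b=350, n_b=10_000)
print(f"z = {z:.2f}, p-value = {p_value:.4f}")
print("significant at 95%" if p_value < 0.05 else "not significant at 95%")
```

A p-value below 0.05 corresponds to the 95% threshold. The check is only trustworthy if you run it once, at the sample size you planned for.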

Don't stop a test just because one version is "winning" after a day. Let it run until you hit your pre-calculated sample size. Peeking early is the cardinal sin of A/B testing—the fastest way to fool yourself. For a deeper dive, our guide on how to interpret t-test results is an excellent start.
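Where does a "pre-calculated sample size" come from? Here's a rough Python sketch using the standard normal-approximation formula for comparing two proportions. The defaults (95% confidence, 80% power) are common conventions rather than rules, and the baseline rate and target lift are assumptions you'd replace with your own.

```python
from math import ceil, sqrt
from statistics import NormalDist

def sample_size_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Visitors needed per variant to detect the given relative lift."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided threshold
    z_beta = NormalDist().inv_cdf(power)
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)

# Assumed inputs: a 2.9% baseline and a hoped-for 20% relative lift.
n = sample_size_per_variant(baseline=0.029, relative_lift=0.20)
print(f"visitors needed per variant: {n:,}")
```

The smaller the lift you want to detect, the more traffic you need, and the growth is steep: halving the target lift roughly quadruples the required sample.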

From Test to Rollout: The Final Mile

You ran a disciplined test, hit significance, and crowned a winner. Pop the champagne? Not so fast.

This is where many solid CRO programs stumble. Shipping the winning variation to 100% of users and immediately jumping to the next test is a classic mistake. The final stretch is about making sure your gains translate into lasting business impact.

Watch post-launch metrics like a hawk. Does the lift hold up? And just as critically, did you inadvertently break something else? A new headline that boosts sign-ups but kills long-term retention isn't a win. It's just moving your leaky bucket.

From Static Reports to Live Monitoring

Relying on a weekly report to check a new feature is like driving by looking only in the rearview mirror. You’re reacting to what’s already happened. You need a live, dynamic view of performance.

The ultimate goal is a powerful flywheel: test, learn, implement, repeat. Shortening this cycle is what separates teams that run occasional tests from true learning organizations.

Tools like a conversational AI data analyst give you this capability without tying up engineers. With Statspresso, you can spin up a live dashboard in minutes to monitor your new feature's vitals. This lets you spot unintended consequences before they snowball.

Let’s say you just pushed a new checkout flow live. Instead of waiting, simply ask:

Try asking Statspresso: "Show me the checkout completion rate and average order value for the last 7 days compared to the previous 7 days."

This immediate feedback loop is crucial. It’s the difference between quickly patching a small leak and dealing with a major flood.
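As an illustration of what that before-and-after comparison computes, here's a small Python sketch. The daily records, the field names, and the launch date are all invented; in practice these rows would come from your own order data.

```python
from datetime import date, timedelta

def summarize(daily, start, end):
    """Checkout completion rate and AOV for days in [start, end)."""
    rows = [d for d in daily if start <= d["day"] < end]
    started = sum(d["checkouts_started"] for d in rows)
    orders = sum(d["orders"] for d in rows)
    revenue = sum(d["revenue"] for d in rows)
    return {
        "completion_rate": orders / started if started else 0.0,
        "aov": revenue / orders if orders else 0.0,
    }

# Hypothetical data: 14 days, new checkout flow launched 7 days before `today`.
today = date(2025, 6, 15)
daily = []
for offset in range(14, 0, -1):
    after_launch = offset <= 7
    daily.append({
        "day": today - timedelta(days=offset),
        "checkouts_started": 200,
        "orders": 130 if after_launch else 120,
        "revenue": 130 * 48.0 if after_launch else 120 * 52.0,
    })

previous = summarize(daily, today - timedelta(days=14), today - timedelta(days=7))
current = summarize(daily, today - timedelta(days=7), today)

for label, stats in (("previous 7 days", previous), ("last 7 days", current)):
    print(f"{label}: completion {stats['completion_rate']:.0%}, AOV ${stats['aov']:.2f}")
```

In this made-up data the completion rate goes up while average order value goes down, so revenue per started checkout barely moves. That's the kind of trade-off you only catch by watching more than one metric.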

While standard A/B testing is often the go-to, advanced methods like split URL or multivariate tests are invaluable for tackling complex optimization challenges before you even get to rollout.

Personalization: The Next Frontier

Once you've confirmed a change is a net positive, the game gets more interesting. The next move is personalization.

Your experiment results almost always contain hidden gems. You might find your new design was a massive success with mobile users from organic search but performed worse for desktop users from paid campaigns. This is gold.

Instead of a one-size-fits-all rollout, deliver truly relevant experiences. Ship the winning variation only to the audience segments it won with. This is how you evolve from basic A/B tests to a sophisticated optimization strategy.
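A personalization rule like this can be very simple. Here's a hedged Python sketch: the segment keys, the lift numbers, and the `significant` flag are hypothetical stand-ins for whatever your own segmented test analysis produces.

```python
# Hypothetical per-segment outcomes from a finished test.
segment_results = {
    ("mobile", "organic"): {"lift": 0.18, "significant": True},
    ("desktop", "paid"): {"lift": -0.07, "significant": True},
    ("desktop", "organic"): {"lift": 0.02, "significant": False},
}

def winning_segments(results):
    """Segments where the variant won with a significant positive lift."""
    return {
        segment
        for segment, outcome in results.items()
        if outcome["significant"] and outcome["lift"] > 0
    }

def choose_experience(device, channel, winners):
    """Serve the variant only to segments it won with; default to control."""
    return "variant" if (device, channel) in winners else "control"

winners = winning_segments(segment_results)
print(choose_experience("mobile", "organic", winners))
print(choose_experience("desktop", "paid", winners))
```

Defaulting to the control for any segment you haven't proven out keeps the rule conservative: untested or inconclusive audiences see the experience you already know.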

By using Statspresso to skip the SQL and just ask your data questions, you can uncover these high-value segments in seconds. This speed turns an insight into an actionable personalization rule before the data gets cold, giving you a serious competitive edge in what we call GenBI, or Generative Business Intelligence.

Common CRO Questions I Hear All the Time

Even with a full playbook, a few questions always pop up. Let's tackle the most common ones I hear.

What Is a Good Conversion Rate?

This is the most-asked question, and the only honest answer is: it depends. You'll see articles throwing around an average website conversion rate of 2.9%, but that figure is useless without context.

An e-commerce store selling $20 t-shirts is playing a different game than a B2B SaaS company closing a $50,000 contract. Their rates will be, and should be, worlds apart. Forget benchmarks. The only number that matters is your own. Your goal is to consistently beat what you did last month.

How Long Should I Run an A/B Test?

Let the math decide, not the calendar and not an early lead. Run your test until it reaches the sample size you calculated up front, and for at least one or two full business cycles (e.g., two weeks) to smooth out daily traffic patterns.

The biggest mistake is checking results every day and stopping the moment one version pulls ahead or first crosses significance. This is called "peeking," and it's a surefire way to get a false positive. Let the numbers mature.

How Much Traffic Do I Need for CRO?

While there isn't a magic number, more traffic helps you get to a significant result faster. If your site has low traffic—fewer than 5,000 unique visitors per month—traditional A/B testing can be painfully slow.
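To see how slow "painfully slow" can get, here's a back-of-the-envelope Python sketch. The required sample size of 14,000 visitors per variant is an assumed input (it depends on your baseline rate and the lift you want to detect), and the function name and parameters are my own.

```python
from math import ceil

def weeks_to_finish(required_per_variant, monthly_visitors, variants=2, share_in_test=1.0):
    """Whole weeks needed to collect the required sample across all variants."""
    weekly_visitors = monthly_visitors * 12 / 52 * share_in_test
    return ceil(required_per_variant * variants / weekly_visitors)

# Assumed requirement: 14,000 visitors per variant for a two-variant test.
for monthly in (5_000, 100_000):
    weeks = weeks_to_finish(14_000, monthly)
    print(f"{monthly:,} visitors/month -> about {weeks} weeks")
```

A test that needs months to finish is rarely worth running, which is why low-traffic sites are better served by bigger swings that need far fewer visitors to detect.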

When traffic is low, swing for the fences. Test big, bold changes more likely to create a major impact:

Test a completely new value proposition.

Try a radically simplified landing page.

Lean heavily on qualitative feedback from user surveys and interviews to guide your big bets.

Can CRO Hurt My SEO?

When done right, absolutely not. Search engines like Google understand A/B testing.

Just follow best practices. For split URL tests, add a rel="canonical" tag on the variant page that points to the original, and use a temporary (302) redirect rather than a permanent (301) one. Avoid "cloaking" (showing one version to users and a different one to search bots), and end the test once you have your answer. In the long run, a better user experience often leads to higher engagement, which can give your SEO rankings an indirect boost.

Ultimately, the key to great website conversion optimization is getting fast, reliable answers from your data. Waiting for analysts is a massive bottleneck. A conversational AI data analyst like Statspresso lets you skip the SQL and get straight to the insights you need.

Ready to stop guessing? Connect your first data source to Statspresso for free and ask your first question. Get clear charts, not confusion, in just seconds. Find your answers here.
