Startup Data Analysis: A Founder's No-SQL Growth Guide

You’ve got questions piling up right now. Which channel is bringing customers who stick? Where is churn starting? Why did conversion dip after the last release? The answers exist, but they’re trapped in Postgres, HubSpot, Shopify, Stripe, spreadsheets, and one overworked analyst’s queue.

That bottleneck isn’t normal. It’s just old. Waiting two weeks for a dashboard that’s already stale by the time it lands is a relic. Startup data analysis should mean fast answers to urgent business questions, not tickets, exports, and SQL archaeology.

Stop Waiting for Data and Start Making Decisions

Most founders treat slow analytics like bad weather. Annoying, but unavoidable. It isn’t.

The cost of delay is brutal in startups because the margin for error is tiny. About 90% of startups fail overall, and the leading causes map directly to missing or late analysis: 42% fail because there’s no market need, and 29% run out of funding, according to Exploding Topics startup statistics. If you learn too slowly, you validate the wrong thing, spend on the wrong thing, or keep a weak motion alive for too long.

That’s why I get impatient with the old playbook. A founder asks for a retention breakdown. Someone exports CSVs. Someone else writes SQL. A chart appears after three rounds of clarification. By then, the product team has already shipped something else and marketing has changed the campaign setup.

Practical rule: If your answer arrives after the decision window closed, you don’t have analytics. You have reporting.

The better model is simple. Ask a business question in plain English. Let the system translate that into the query, pull the data, and return a chart or number immediately. That is startup data analysis in practice. Not a museum exhibit of dashboards no one trusts.

A lot of teams still assume self-serve analytics means teaching everyone SQL or buying a bigger BI tool. It usually means the opposite. Fewer layers. Fewer handoffs. Less ceremony between question and answer.

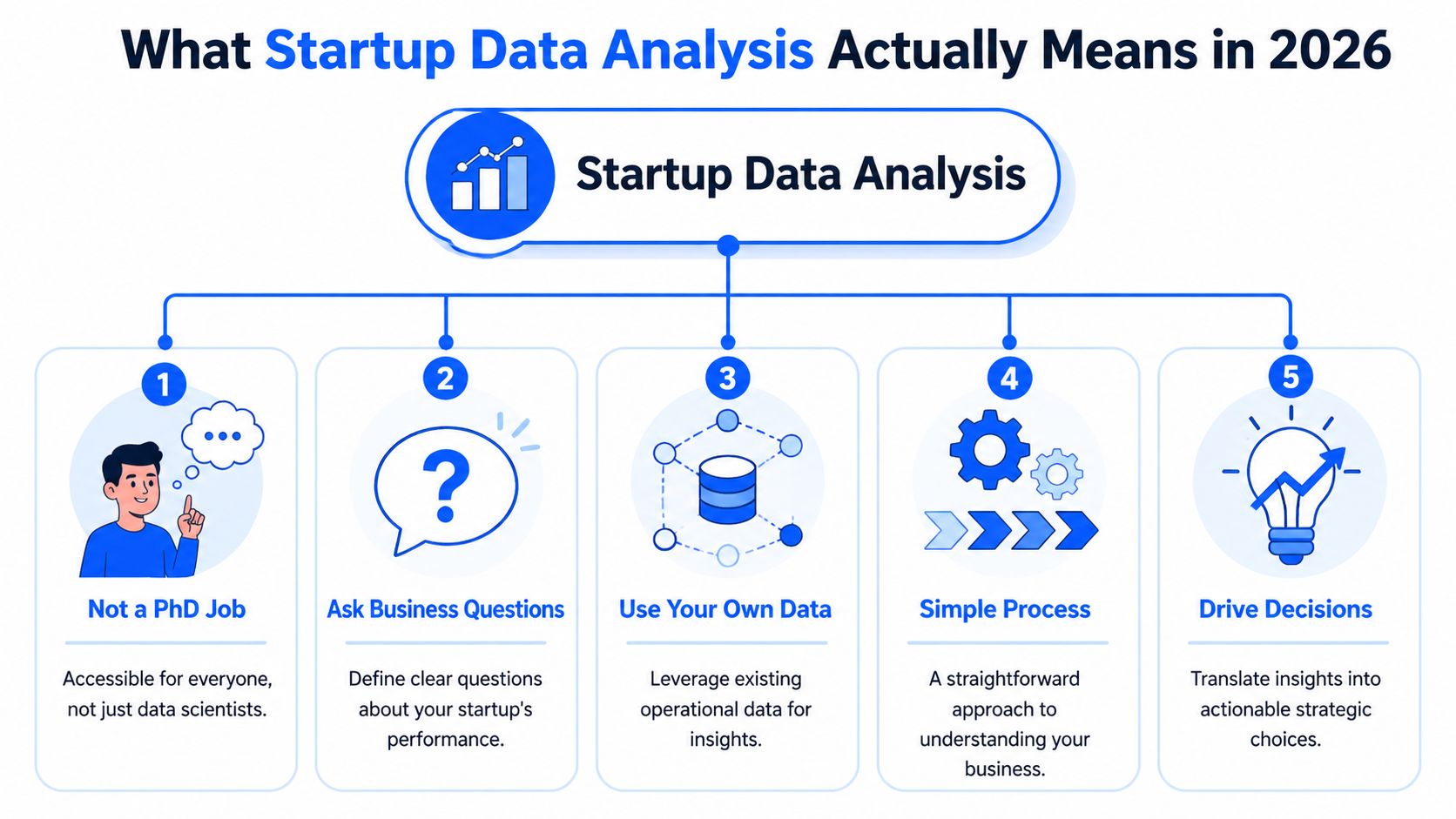

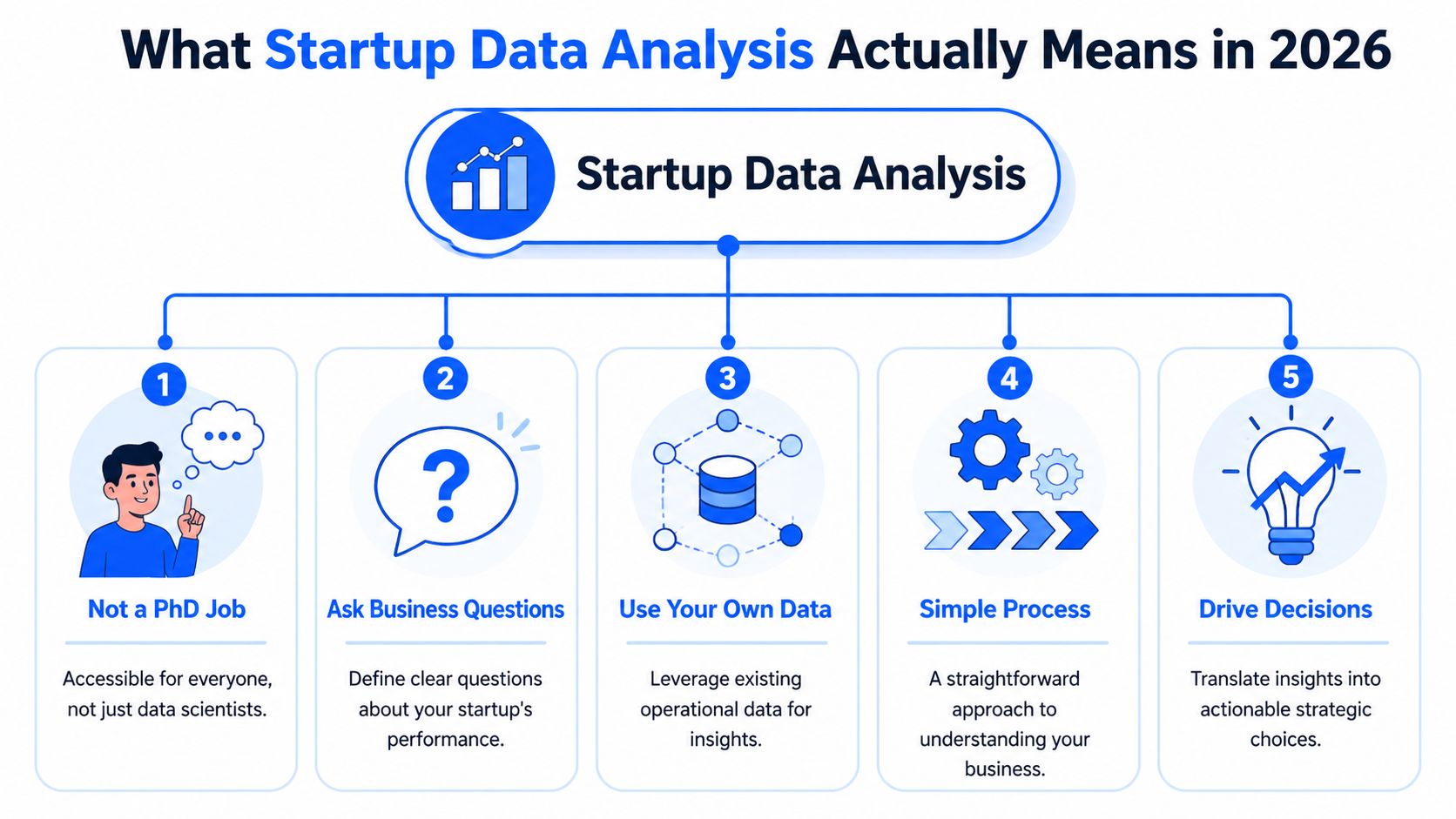

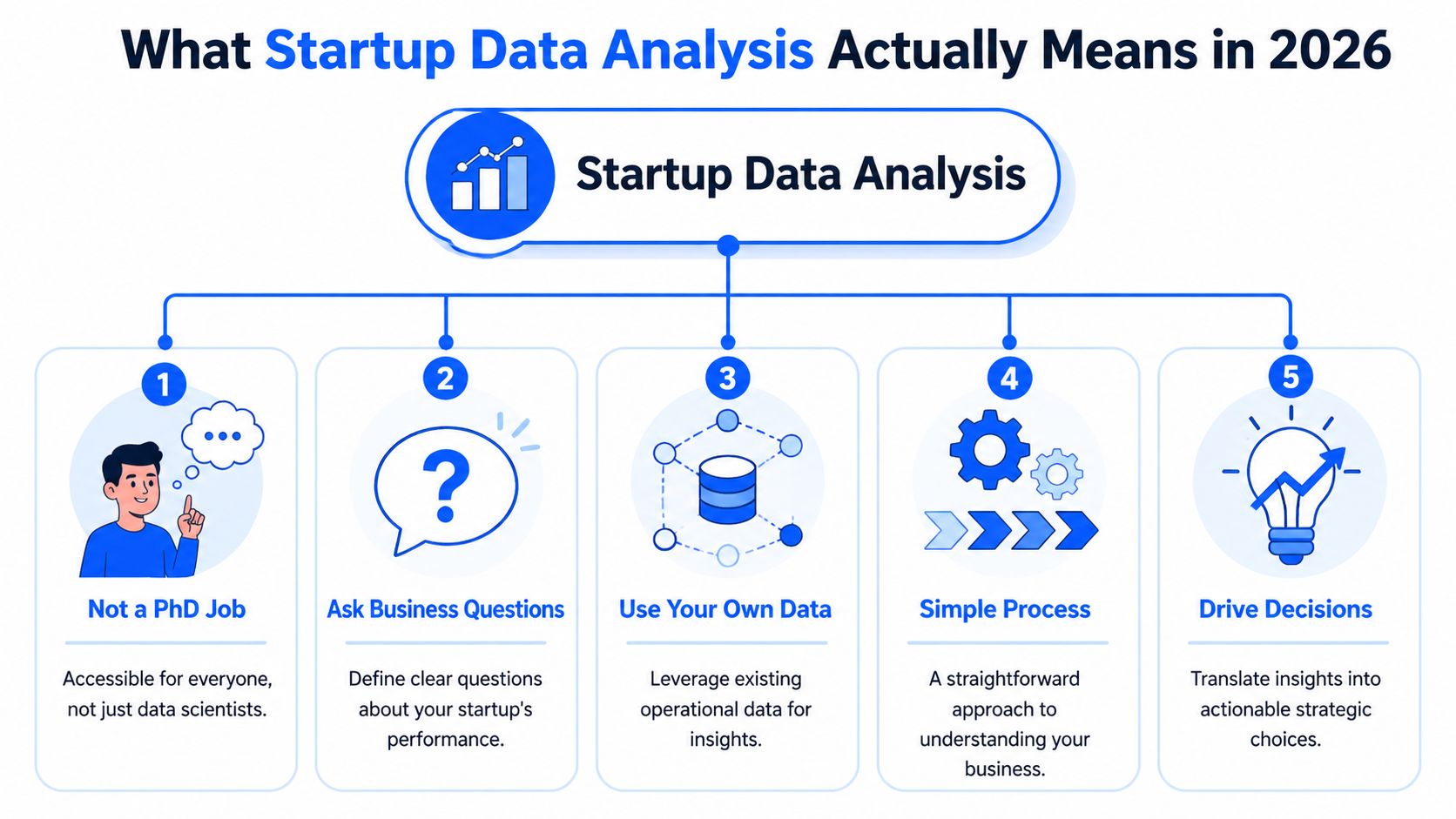

What Startup Data Analysis Actually Means in 2026

“Startup data analysis” sounds more technical than it is. Strip away the jargon and it comes down to this: ask a business question, use your own data, get an answer you can act on.

That matters because the volume problem is getting worse, not better. Americans started 5.5 million businesses in 2023, and 2.5 quintillion bytes of data are generated daily. Startups using AI-powered analytics see productivity gains of up to 63% according to High5’s startup statistics roundup. Founders aren’t short on data. They’re short on time and clear interpretation.

It’s a conversation, not a reporting project

The old setup forced everyone to speak through a translator. You had to know SQL, understand schemas, and remember which table held the “real” subscription status. A human analyst sat in the middle and converted business questions into database logic.

That still works. It’s just slow.

A Conversational AI Data Analyst changes the interaction model. You ask, “Show me trial-to-paid conversion by signup month.” The system handles the translation, pulls from the connected sources, and returns the result as a chart, table, or summary. That’s Conversational Analytics in plain terms.

The useful definition of startup data analysis

If you’re a founder, PM, or growth lead, startup data analysis isn’t “doing BI.” It’s asking things like:

Acquisition questions such as which channels bring users who complete a key action

Product questions such as where activation stalls after signup

Revenue questions such as which customer segments expand fastest

Operations questions such as whether support load spikes after a release

None of those require you to become a data engineer. They require access, context, and a way to ask clearly.

Startup teams rarely need more dashboards first. They need fewer obstacles between curiosity and evidence.

What Automated BI looks like in real life

Good Automated BI doesn’t dump another dashboard folder on your team. It does three things well:

Connects scattered sources

Your product usage might live in Postgres. Leads sit in HubSpot. Orders come through Shopify. Tickets live somewhere else. Analysis only gets useful when those systems can be queried together.

Translates plain English into analysis

This is the part non-technical teams care about most. Not because SQL is evil, but because writing and debugging queries is the wrong use of time for most operating teams.

Returns answers in a usable format

A result should come back as something people can immediately discuss. A cohort chart. A funnel. A trend line. A short explanation.

Try asking your analytics tool: “Show me weekly active users who completed our core action over the last 12 weeks.”

Then ask: “Break that down by signup channel.”

That’s a proper startup workflow. One question leads to the next. You follow the signal instead of waiting for someone to package it for you.

What doesn’t count

A few things get mistaken for startup data analysis all the time:

Dashboard hoarding where every team gets twenty charts and no one knows which one matters

Spreadsheet relays where people re-export the same numbers every Monday

Metric tourism where leaders peek at graphs but don’t tie them to decisions

Tool collecting where buying software substitutes for deciding what questions matter

Real analysis reduces uncertainty around a decision. If it doesn’t help someone choose, prioritize, cut, or double down, it’s decoration.

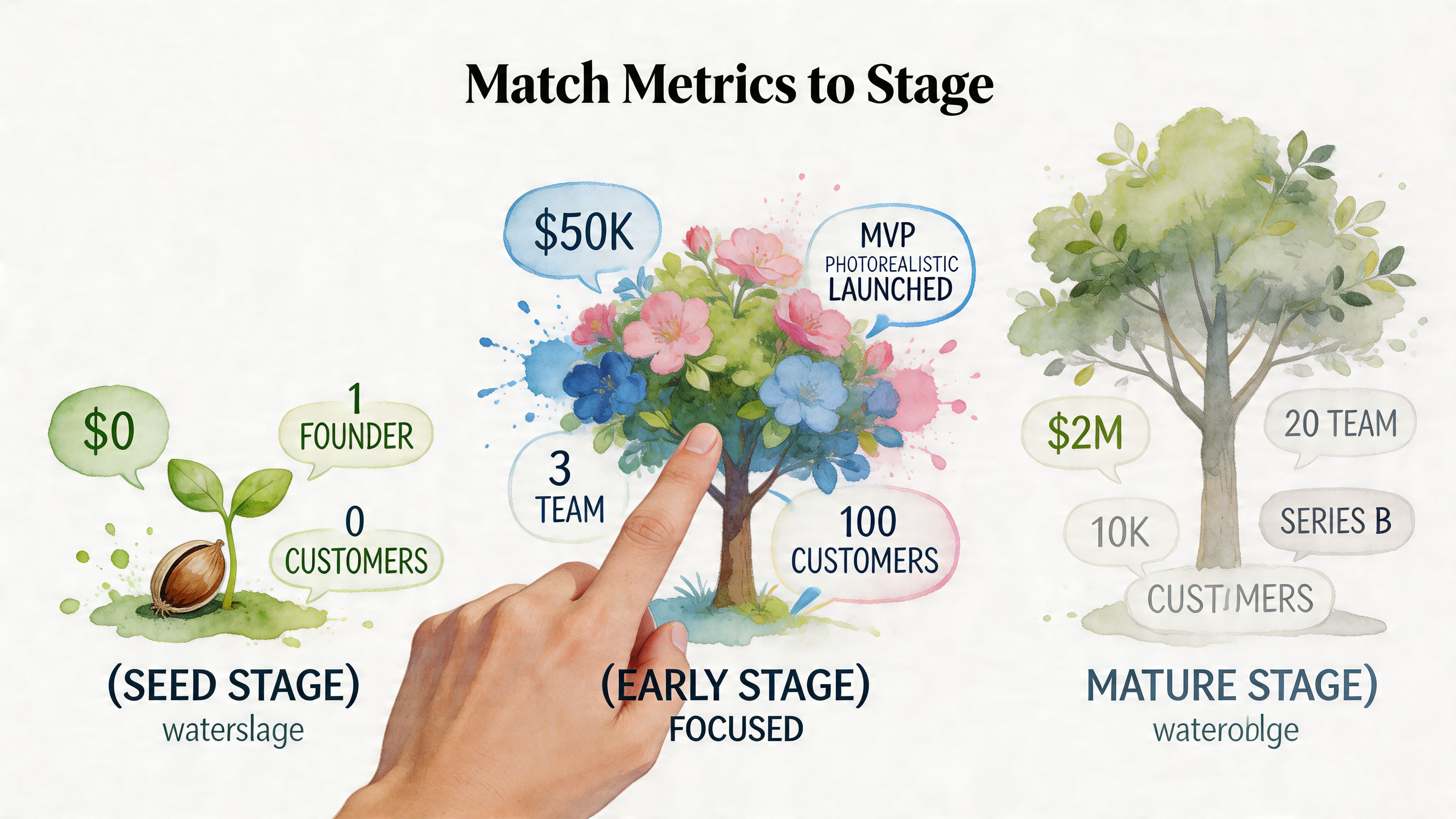

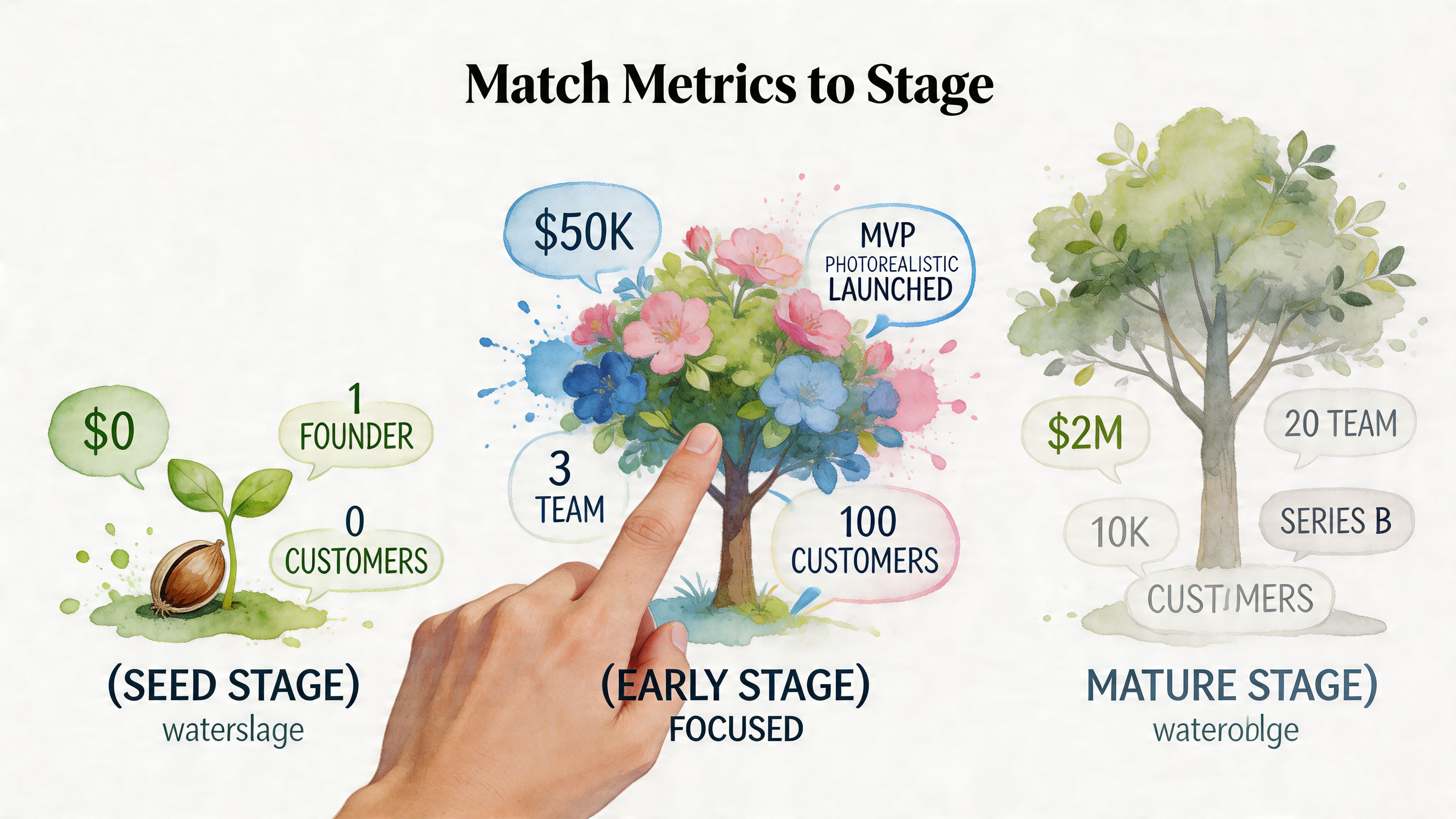

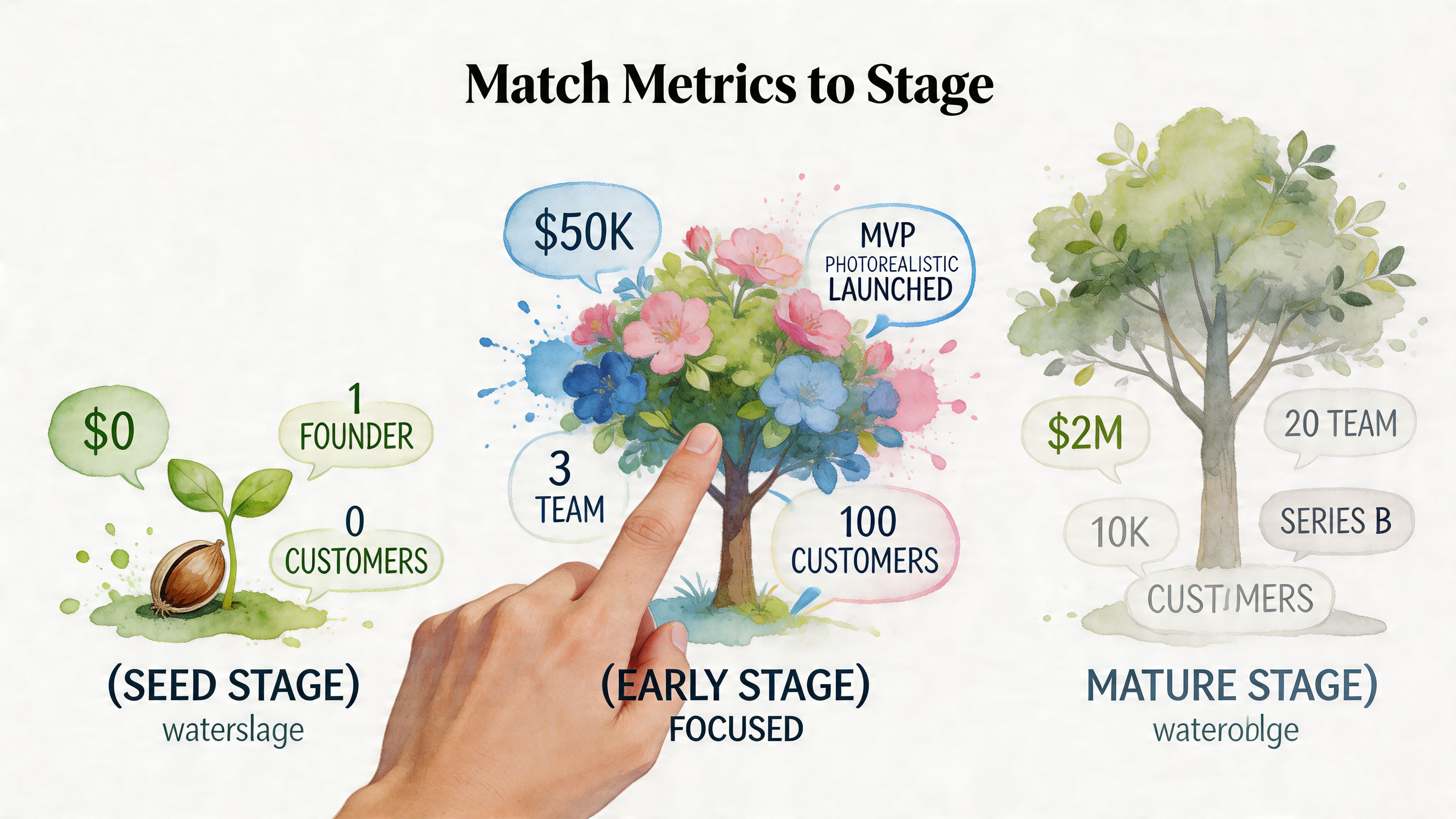

Match Your Metrics to Your Startup Stage

Most startup metrics advice is written as if every company should track the same things from day one. That’s how teams end up obsessing over polished board metrics before they’ve proven anyone cares.

The useful way to approach startup data analysis is by stage. Early on, you’re looking for evidence of need and usage. Later, you care more about efficiency, repeatability, and scaling what already works.

Pre-seed and seed

At this stage, the dangerous mistake is tracking polished metrics that assume a stable business model. You probably don’t have one yet.

The right questions are messier:

Are new users reaching the first moment of value?

Do they come back without being chased?

Which user behaviors show genuine pull, not polite curiosity?

What are people complaining about in support, onboarding calls, or feedback forms?

You’re looking for engagement signals and product-market fit clues, not elegant financial storytelling.

A founder at this stage should care more about patterns like “users who import data in the first session return next week” than broad totals like signups. Total signups are easy to inflate. Meaningful usage is harder to fake.

Try asking your analytics tool:

“Show me the percentage of new users who completed our activation event within 7 days.”

“Chart retention by signup week.”

“List the most common themes in support tickets from new users.”

That last one matters more than many teams admit. Support tickets, review text, and survey answers often reveal unmet needs and competitive gaps long before a dashboard does.
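If you want to sanity-check the number a tool hands back, the activation prompt above reduces to a small calculation. Here is a minimal Python sketch, assuming you can export one row per user with a signup time and an activation time. The sample rows and the `activation_rate` helper are hypothetical, not part of any specific tool.

```python
from datetime import datetime, timedelta

# Hypothetical export: (user_id, signup_time, activation_time or None).
users = [
    ("u1", datetime(2026, 1, 5), datetime(2026, 1, 6)),
    ("u2", datetime(2026, 1, 5), datetime(2026, 1, 20)),  # activated after the window
    ("u3", datetime(2026, 1, 7), None),                    # never activated
    ("u4", datetime(2026, 1, 8), datetime(2026, 1, 8)),
]

def activation_rate(rows, window_days=7):
    """Share of users who activated within window_days of signing up."""
    if not rows:
        return 0.0
    window = timedelta(days=window_days)
    activated = sum(
        1
        for _, signed_up, activated_at in rows
        if activated_at is not None
        and timedelta(0) <= activated_at - signed_up <= window
    )
    return activated / len(rows)

rate = activation_rate(users)
print(f"{rate:.0%} of new users activated within 7 days")
```

The denominator is every new user, not only the ones who activated eventually. That choice is what keeps the number honest.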

Early stage after initial traction

Once usage looks real and revenue starts to matter consistently, your questions change. You’re no longer asking only “do people want this?” You’re asking, “can we grow this without lighting cash on fire?”

At this stage, startup data analysis requires connecting product, marketing, and revenue systems. You want to see whether the users you acquire are becoming the customers you hoped for.

The metric categories get sharper:

Acquisition efficiency

Which channels bring users who activate, retain, and convert, not just click?

Retention and cohort behavior

Are newer cohorts healthier than older ones? If not, your “growth” may just be a bigger top of funnel hiding a leaky product.

Sales and funnel movement

Where do opportunities stall? Do certain segments close faster? Does deal quality differ by source?

Cash discipline

You don’t need a wall-sized finance dashboard. You do need a clear view of what’s moving revenue and what’s draining attention.

A startup with traction can still die from blurry feedback loops. Growth hides mistakes for a while. Then the bill arrives.

Useful prompts here look like:

“Show conversion from lead to customer by acquisition source over time.”

“Create a cohort chart of retained users by signup month.”

“Compare churn patterns between customers acquired through paid channels and referrals.”

“Show product usage in the 30 days before cancellation.”

Those questions are better than asking for “the KPI dashboard.” They force the analysis toward choices.

Growth stage

By the time a startup has multiple teams, more channels, and a real operating rhythm, the problem changes again. It’s less about whether data exists and more about whether everyone means the same thing when they say “customer,” “active,” “churn,” or “revenue.”

At this point, the strongest metric set is usually small and cross-functional. A giant dashboard library creates a false sense of control. Teams need a handful of trusted indicators with clear owners.

Three habits matter:

Choose metrics tied to decisions

If no one changes behavior when the number moves, it probably shouldn’t sit in your core set.

Keep one stage-specific scorecard

Not a dashboard graveyard. A compact operating view.

Use prompts that compare, segment, and explain

“What changed?” is more useful than “what happened?”

Try prompts like:

“Show month-over-month expansion revenue by customer segment.”

“Which product actions correlate with renewal?”

“Compare support volume and churn after the last three product releases.”

The stage mismatch that wastes the most time

I see the same failure pattern constantly. A seed company copies a mature SaaS metric stack. They debate definitions, build dashboards, and monitor numbers that don’t guide a current decision.

Meanwhile, key questions sit unanswered:

Why do users drop after signup?

Which customer type gets value fastest?

What objection appears over and over in calls?

Which feature gets used once and never again?

Startup data analysis works when the questions are narrow enough to matter right now. If your team can’t name the next decision a metric should inform, don’t build the metric yet.

For more technical teams that still need SQL examples alongside self-serve analysis, an internal guide like The Guide to SQL Charting can help bridge that gap without turning every question into a custom reporting project.

Getting Your Data House in Order for Analysis

You can’t answer cross-functional questions if every system lives on its own little island. Product has one dataset. Marketing has another. Sales trusts the CRM. Finance trusts exports. Everyone claims they’re “data-driven,” and then the meeting turns into a debate about whose spreadsheet is least wrong.

That’s why the first job in startup data analysis is boring but unavoidable. Get the data into one place people can use.

Why ELT replaced old-school ETL

If you’ve heard both terms and mentally filed them under “annoying data plumbing,” fair enough. The practical difference is simple.

With ETL, teams try to clean and transform data before loading it into the warehouse. That sounds tidy. In practice, it often slows everything down.

With ELT, you extract the data, load it first, and transform it after. That approach fits startup speed much better. The ELT pipeline has become the standard for agile startups and can reduce data latency by 70% to 90% compared with older ETL methods, according to Weld’s guide to startup data analytics.

That matters because your questions don’t stay in one department. Once Shopify, HubSpot, Postgres, and other tools land in a central warehouse, you can ask things the old setup made painful:

Did customer support volume change after a release?

Which campaign source produces users who retain?

What happens to expansion revenue after a drop in product usage?
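For anyone curious what “load first, transform after” looks like mechanically, here is a toy Python sketch. It uses an in-memory SQLite database as a stand-in for a warehouse, and the table, view, and sample rows are all hypothetical. A real pipeline would use a managed loader and a real warehouse, so treat this as an illustration of the ordering only.

```python
import sqlite3

# In-memory SQLite stands in for the warehouse (an assumption for illustration).
conn = sqlite3.connect(":memory:")

# Extract + Load: land the source rows untouched, messy values included.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, email TEXT, amount TEXT)")
raw_rows = [
    ("1001", " Ana@Example.com ", "49.00"),
    ("1002", "ana@example.com", "19.50"),
    ("1003", "BO@example.com", "100"),
]
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_rows)

# Transform: clean up inside the warehouse, after the data has landed.
conn.execute("""
    CREATE VIEW orders_clean AS
    SELECT order_id,
           lower(trim(email)) AS email,
           CAST(amount AS REAL) AS amount
    FROM raw_orders
""")

revenue_by_customer = dict(conn.execute(
    "SELECT email, SUM(amount) FROM orders_clean GROUP BY email ORDER BY email"
).fetchall())
print(revenue_by_customer)
```

Because the raw table is never modified, a changed business definition means rewriting the view, not re-running the extract.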

What a sane startup stack looks like

You do not need a miniature enterprise architecture diagram taped to the wall.

A workable setup usually has these pieces:

Operational sources like Postgres, HubSpot, Shopify, Stripe, or Linear

A warehouse where the raw data lands

A light modeling layer so core fields and business definitions make sense

An analytics interface that non-technical people can use without filing tickets

For some teams, outside help is useful during setup. If you need guidance on architecture or governance, reviews of data science consulting firms can help you compare the kinds of partners that support early-stage analytics builds.

The trap is overbuilding. Founders hear “single source of truth” and assume they need to model every object, event, and edge case before anyone can ask a question. They don’t. Start with the handful of systems that define customer acquisition, product usage, and revenue.

Centralization is not about collecting everything. It’s about connecting the few sources that answer your most expensive questions.

Instrument only what you’ll use

Tracking plans get bloated fast. Someone suggests logging every click, every hover, every page interaction, every background task. Then no one maintains the definitions, and six months later the event stream becomes landfill.

Track the actions that map to your business model. If you’re a SaaS product, that usually means a few core moments:

Signup and activation events

Key product actions tied to value

Subscription or purchase events

Cancellation or downgrade signals

Support and feedback touchpoints

When the raw data is messy, clean the highest-value fields first. Customer IDs, timestamps, event names, and revenue logic beat cosmetic cleanup every time. If your team is sorting through duplicate fields, missing labels, and inconsistent naming, this guide on how to clean up data is a practical place to start.

One connected question beats ten isolated dashboards

Founders often ask for a dashboard by department because that’s how the org chart looks. The better path is to center analysis on business questions.

For example:

“Which acquisition sources lead to the highest activation and lowest early churn?”

“Do accounts with more support tickets expand less?”

“Did release timing affect retention for new cohorts?”

Those answers require connected data, not prettier chart colors.

This is also the section where a tool can earn its keep. A platform such as Statspresso connects sources like Shopify, HubSpot, Linear, and Postgres so teams can ask plain-English questions and get charts back without writing SQL. That’s useful when the goal is self-serve analysis, not another reporting backlog.

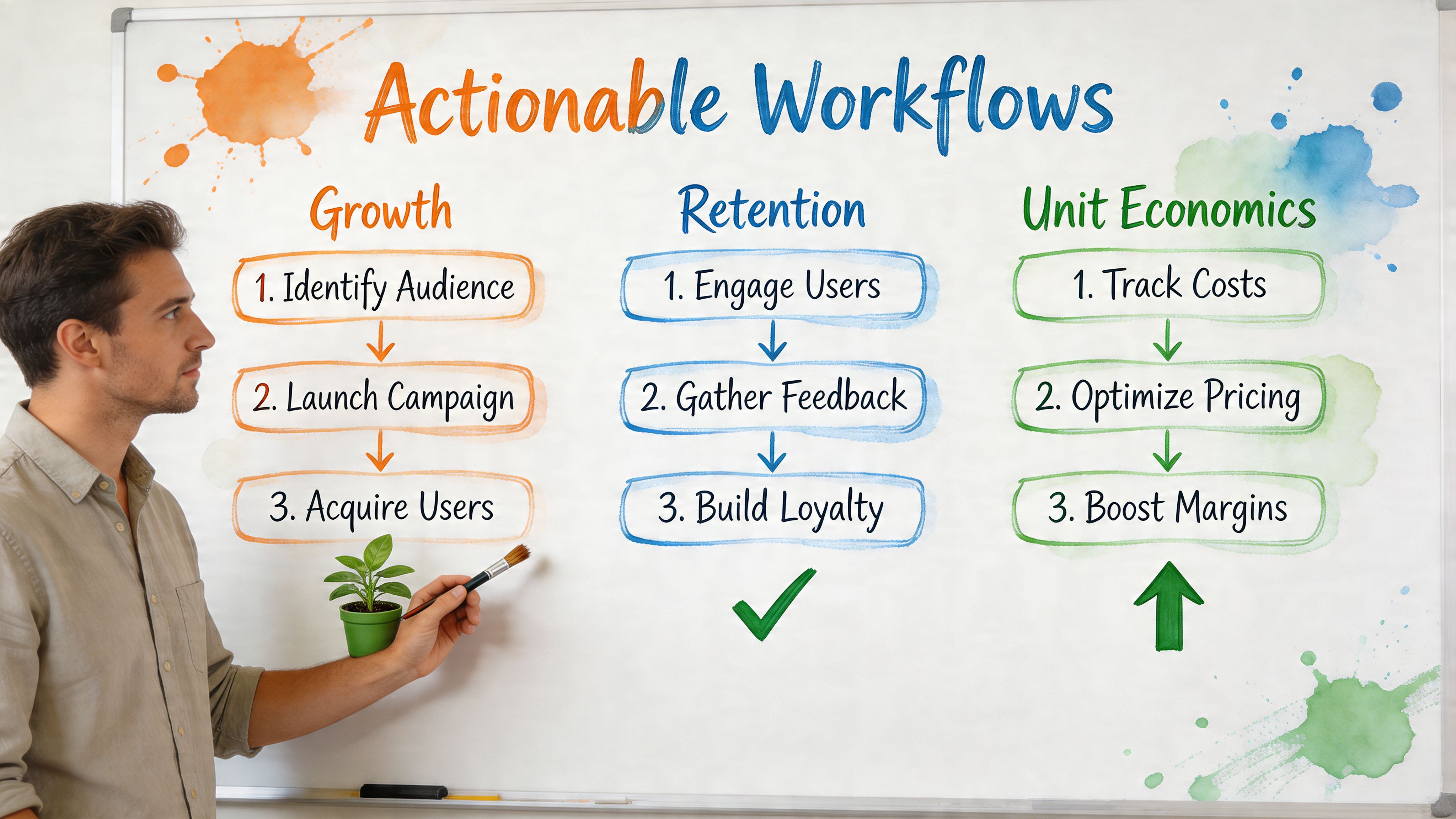

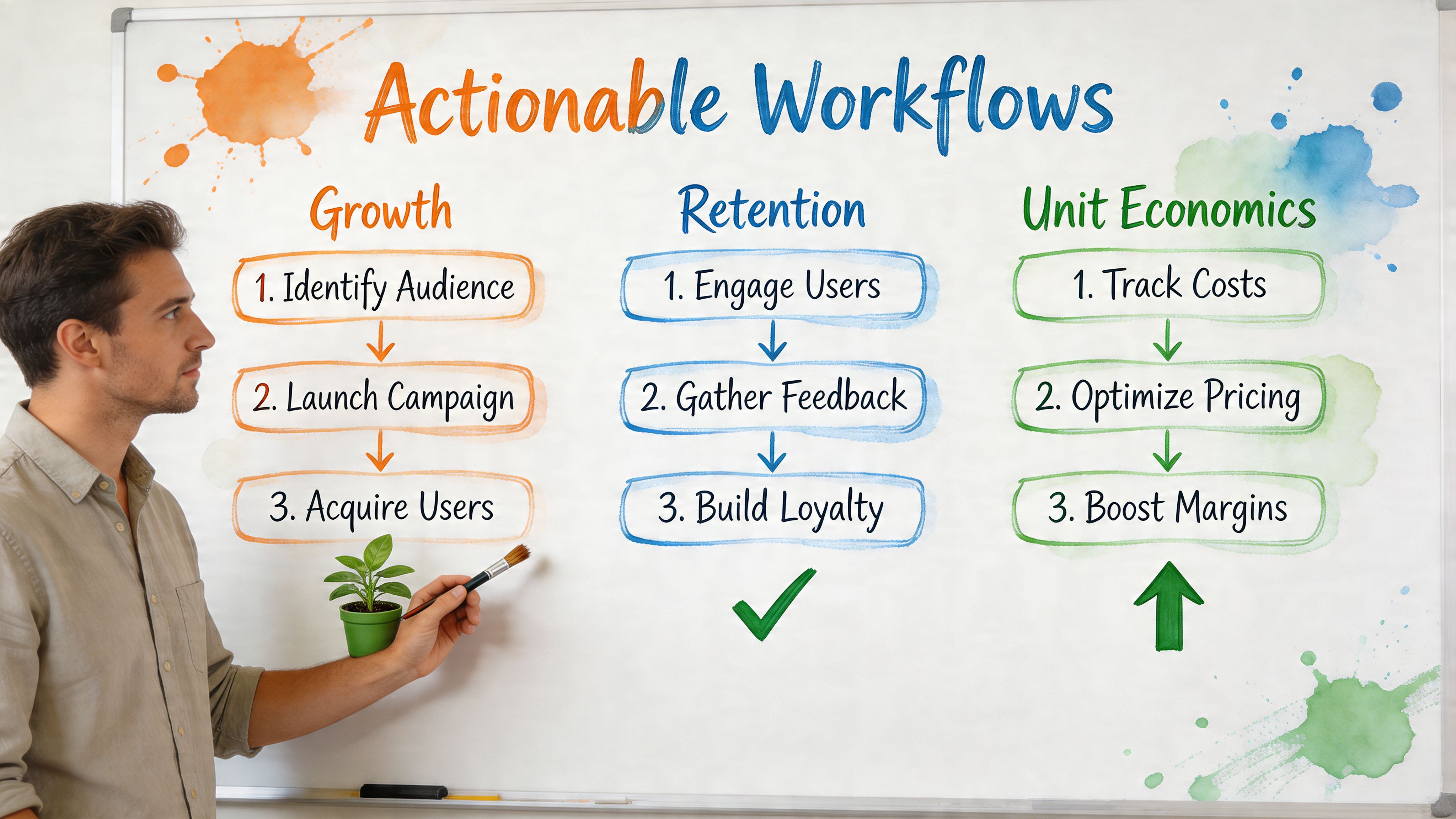

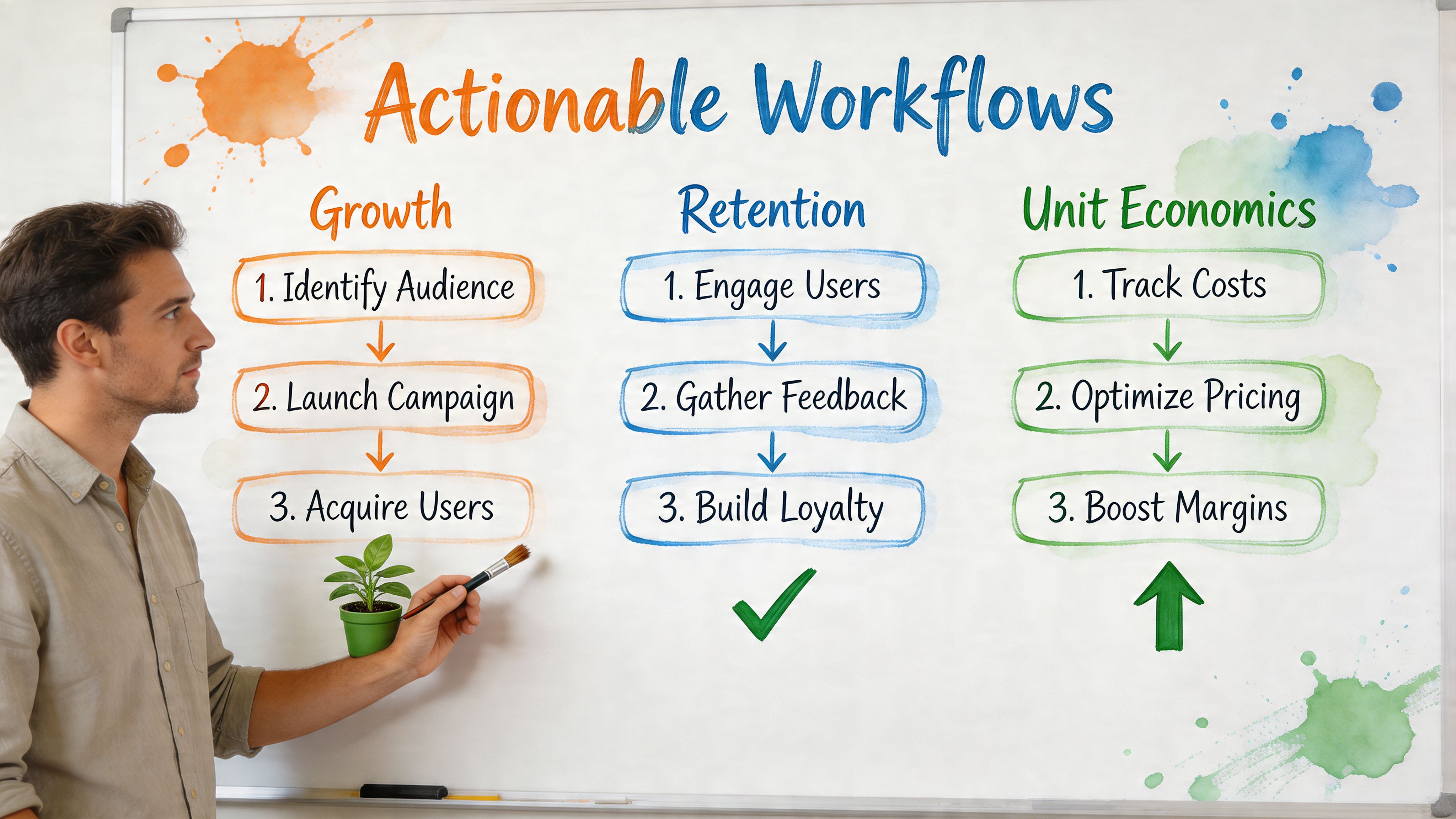

Analysis Workflows You Can Use Today

The theory is straightforward. The daily work is where teams struggle. Someone needs an answer. Nobody wants to open five tools, remember table joins, and spend the afternoon explaining why the metric definition changed.

The fix is to standardize a few core workflows that your startup will use constantly. For startups, these often fall into three buckets: growth, retention, and unit economics.

The old way and the new way of getting answers

| Task | Old Way (Manual SQL & BI Tools) | New Way (Statspresso) |
|---|---|---|
| Check monthly revenue trend | Ask analyst for a report, wait for query and chart build | Ask in plain English and get a chart in seconds |
| Compare channel quality | Pull data from ad platform, CRM, and product tables, then reconcile manually | Ask for activation or conversion by source across connected data |
| Review retention by cohort | Build or reuse cohort SQL, validate date logic, then export to BI | Request a cohort chart directly |
| Investigate churn risk | Join support, billing, and product activity tables manually | Ask what behaviors changed before cancellation |
| Share findings with team | Screenshot dashboard or send spreadsheet export | Share the generated chart and explanation |

Growth analysis

Growth analysis should answer one basic question: which inputs create useful customers, not just more traffic?

A lot of teams stop at top-of-funnel numbers because they’re easy to access. Clicks, signups, and sessions arrive quickly. The harder part is tracing quality through activation, conversion, and retention.

Useful growth workflow:

Start with source performance

Look at acquisition sources side by side, but don’t stop at volume.

Add product qualification

Ask which sources produce users who complete the core action.

Then check revenue outcome

Which channels lead to customers who stick or expand?

Try these prompts:

“Show signups, activation, and paid conversion by acquisition source for the last 90 days.”

“Which traffic sources bring users who complete our key product action within 7 days?”

“Compare revenue by first-touch source and last-touch source.”

This is where AI-led analysis can prove more useful than static reporting. Startups using AI-driven predictive analytics reduce market missteps by 30% to 50%, and AI models can process multivariate time-series data 100x faster than manual SQL queries, according to HubSpot’s discussion of AI data analysis for startups. In practice, that means the system can inspect patterns across behavior, timing, and conversion signals much faster than a person stitching queries together by hand.

Don’t ask, “Which channel has the most leads?” Ask, “Which channel produces users who become healthy customers?”
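The three-step growth workflow above (volume, then product qualification, then revenue outcome) can be sketched in a few lines of Python. This assumes you already have one joined record per user. The field names and sample data are hypothetical.

```python
from collections import defaultdict

# Hypothetical per-user records joined across ad, product, and billing data.
users = [
    {"source": "paid_search", "activated": True,  "paid": False},
    {"source": "paid_search", "activated": False, "paid": False},
    {"source": "paid_search", "activated": False, "paid": False},
    {"source": "paid_search", "activated": True,  "paid": True},
    {"source": "referral",    "activated": True,  "paid": True},
    {"source": "referral",    "activated": True,  "paid": False},
]

def funnel_by_source(rows):
    """Count signups, activations, and paid conversions per acquisition source."""
    counts = defaultdict(lambda: {"signups": 0, "activated": 0, "paid": 0})
    for row in rows:
        c = counts[row["source"]]
        c["signups"] += 1
        c["activated"] += int(row["activated"])
        c["paid"] += int(row["paid"])
    return {
        source: {
            **c,
            "activation_rate": c["activated"] / c["signups"],
            "paid_rate": c["paid"] / c["signups"],
        }
        for source, c in counts.items()
    }

funnel = funnel_by_source(users)
for source, stats in funnel.items():
    print(source, stats)
```

In this made-up sample, paid search wins on volume while referral wins on quality, which is exactly the distinction a lead count alone would hide.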

Retention analysis

Retention is where startup data analysis usually gets honest. Acquisition can flatter you for months. Retention tells you whether the product is worth returning to.

The most effective retention workflow is cohort-first. Group users by signup period, then compare how their activity changes over time. If later cohorts are weaker, something in onboarding, targeting, or product experience probably slipped.

Strong retention questions include:

What do retained users do early that churned users don’t?

Did product changes improve repeat behavior or just shift first-week activity?

Are there support or onboarding signals that appear before drop-off?

Copy-paste prompts:

“Show me a cohort chart of user retention by signup month.”

“Compare first-week behaviors between retained users and churned users.”

“Show support ticket categories for accounts that canceled within 60 days.”

“Which features are most used by customers who renew?”
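The cohort-first approach described above is simple enough to sketch by hand. The Python below is a rough illustration, assuming a signup date per user and a list of days each user was active. All names and sample data are hypothetical, and “retained” here just means “active at least once in that month.”

```python
from collections import defaultdict
from datetime import date

# Hypothetical inputs: signup date per user, and days on which each user was active.
signups = {"u1": date(2026, 1, 10), "u2": date(2026, 1, 25), "u3": date(2026, 2, 3)}
activity = [
    ("u1", date(2026, 1, 12)), ("u1", date(2026, 2, 14)), ("u1", date(2026, 3, 2)),
    ("u2", date(2026, 1, 26)),
    ("u3", date(2026, 2, 4)), ("u3", date(2026, 3, 9)),
]

def months_between(start, end):
    """Whole calendar months from start's month to end's month."""
    return (end.year - start.year) * 12 + (end.month - start.month)

def cohort_retention(signups, activity):
    """Map (signup month, months since signup) to the share of the cohort active."""
    cohort_users = defaultdict(set)
    for user, signed_up in signups.items():
        cohort_users[signed_up.strftime("%Y-%m")].add(user)

    active = defaultdict(set)
    for user, day in activity:
        signed_up = signups[user]
        key = (signed_up.strftime("%Y-%m"), months_between(signed_up, day))
        active[key].add(user)

    return {
        key: len(active_users) / len(cohort_users[key[0]])
        for key, active_users in sorted(active.items())
    }

table = cohort_retention(signups, activity)
for (cohort, offset), share in table.items():
    print(f"{cohort} month +{offset}: {share:.0%}")
```

Reading across a cohort shows how it decays over time. Reading down a month offset shows whether newer cohorts are healthier than older ones.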

Unstructured data matters here. Support tickets, NPS comments, community posts, and app reviews often show the reason before the metric shows the symptom. If multiple customers complain about the same limitation while still using the product heavily, that’s often a competitive gap worth quantifying.

A practical workflow is:

Pull cancellation, downgrade, or inactive-user segments.

Layer in ticket themes and feedback text.

Compare those themes against healthy accounts.

Prioritize fixes where complaint frequency and account value overlap.

That’s not glamorous BI. It’s how teams figure out what’s driving churn.
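The last step of that workflow, prioritizing where complaint frequency and account value overlap, can be approximated with a simple ranking. This Python sketch assumes tickets have already been tagged with a theme (by a person or a tool) and that you know revenue per account. The data, field names, and `rank_themes` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical tickets, already tagged with a theme.
tickets = [
    {"account": "a1", "theme": "slow exports"},
    {"account": "a2", "theme": "slow exports"},
    {"account": "a3", "theme": "confusing billing"},
    {"account": "a1", "theme": "confusing billing"},
    {"account": "a2", "theme": "slow exports"},
]
monthly_revenue = {"a1": 500, "a2": 1200, "a3": 50}  # hypothetical values

def rank_themes(tickets, revenue):
    """Return (theme, ticket_count, revenue_affected), highest revenue first."""
    count = defaultdict(int)
    accounts = defaultdict(set)
    for ticket in tickets:
        count[ticket["theme"]] += 1
        accounts[ticket["theme"]].add(ticket["account"])
    scored = [
        (theme, count[theme], sum(revenue[a] for a in accounts[theme]))
        for theme in count
    ]
    # Each account's revenue counts once per theme, however many tickets it filed.
    return sorted(scored, key=lambda row: (row[2], row[1]), reverse=True)

ranking = rank_themes(tickets, monthly_revenue)
for theme, n, value in ranking:
    print(f"{theme}: {n} tickets, {value} in monthly revenue affected")
```

Sorting by revenue affected rather than raw ticket count is a judgment call. A noisy low-value segment can otherwise crowd out a quieter complaint from your best accounts.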

Unit economics analysis

Plenty of startup teams postpone unit economics because it sounds like finance homework. That’s a mistake. You don’t need a giant model. You need clarity on whether growth is getting more efficient or less.

Three lenses usually matter:

Acquisition cost versus downstream value

Time from acquisition to revenue

Segment differences in payback and churn risk

Useful prompts:

“Compare customer acquisition cost trends with conversion and churn by month.”

“Show average revenue per customer by acquisition source.”

“Which customer segments have the highest churn after discounting?”

“Break down expansion, contraction, and churn by account segment.”
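The first two lenses, acquisition cost versus downstream value and time to revenue, come down to a little arithmetic. A deliberately simplified Python sketch with hypothetical figures follows. It ignores gross margin and churn, both of which a real model should include.

```python
# Hypothetical monthly figures per acquisition source.
sources = {
    "paid_social": {"spend": 6000.0, "new_customers": 20, "avg_monthly_revenue": 50.0},
    "referral":    {"spend": 1000.0, "new_customers": 10, "avg_monthly_revenue": 80.0},
}

def unit_economics(data):
    """Compute CAC and a naive payback period (in months) per source."""
    results = {}
    for source, d in data.items():
        cac = d["spend"] / d["new_customers"]
        # Simplification: payback on revenue, not margin, and assuming no churn.
        results[source] = {
            "cac": cac,
            "payback_months": cac / d["avg_monthly_revenue"],
        }
    return results

economics = unit_economics(sources)
for source, stats in economics.items():
    print(f"{source}: CAC {stats['cac']:.0f}, payback {stats['payback_months']:.2f} months")
```

Tracked month over month, the direction of these two numbers answers the question that matters here: is growth getting more efficient or less?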

When teams do this manually, the pain is predictable. Finance has one export. Marketing has another. Product data lives elsewhere. Dates don’t line up. Definitions drift. By the time the model is ready, nobody trusts it.

A workflow for idea validation and competitive gaps

There’s one workflow I wish more startups used early: analyzing customer complaints and feedback to spot underserved needs. Founders love “market research,” but many skip the richest source sitting inside their own operations.

Ask questions like:

“Summarize the most frequent complaint themes from support tickets in the last quarter.”

“Compare high-usage customers with low satisfaction feedback.”

“Which feature requests appear most often among retained users?”

“Group negative review text by theme and account type.”

That sort of analysis does two useful things. It helps you prioritize product work, and it shows where the market is still under-served. Startups often chase feature parity while customer text is practically begging them to fix a more painful workflow.

Common Data Pitfalls and How to Sidestep Them

Most startup data problems aren’t caused by missing tools. They come from bad habits with nice charts wrapped around them.

Vanity metrics

Total signups, raw pageviews, total followers. They look good in a slide. They rarely tell you what to do next.

The antidote is simple. Tie every metric to a meaningful behavior or business outcome. Instead of “how many users signed up,” ask “how many users signed up and completed the action that predicts value?”

A useful check is this: if the number doubles tomorrow, would you celebrate without asking a follow-up question? If yes, it might be vanity.

Analysis paralysis

Some teams stall because they want perfect tracking, perfect definitions, and perfect confidence before acting. Startups don’t get that luxury.

Use a decision threshold instead. Ask, “Do we have enough signal to choose the next experiment, cut the weak channel, or prioritize the fix?” If yes, move.

Good startup analysis narrows uncertainty enough to act. It doesn’t eliminate uncertainty.

Confirmation bias

Founders are especially vulnerable here because conviction is part of the job. The danger starts when you only ask questions that can confirm your favorite theory.

Counter it by forcing one disconfirming question into the workflow. If you think a feature improves retention, ask what happened to the users who adopted it late, or whether the same pattern appears in segments you didn’t expect.

Inconsistent definitions

Marketing says a customer is a lead who booked a demo. Product says it’s someone who activated. Finance says it’s a paying account. None of them are wrong. They’re just answering different questions.

Fix this by naming the business object precisely. Paying customer. Activated workspace. Closed-won account. Trial user. Clean labels save hours of fake disagreement.

False confidence from tiny samples

Not every change means something real. That’s where a basic grasp of experiment design matters. If your team is running product or landing page tests, a practical explainer on testing statistical significance is worth sharing internally so people stop declaring victory after one noisy result.
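To make the tiny-sample problem concrete, here is a sketch of a standard two-proportion z-test in plain Python. The conversion counts are made up, and the test assumes independent samples large enough for the normal approximation to hold, so treat it as a rough check and not a full experiment framework.

```python
from math import erf, sqrt

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates.

    Assumes the pooled rate is strictly between 0 and 1.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - normal_cdf)

# Same 10% vs 15% conversion, at two very different sample sizes (hypothetical).
small = two_proportion_z_test(10, 100, 15, 100)
large = two_proportion_z_test(1000, 10000, 1500, 10000)
print(f"small sample: z={small[0]:.2f}, p={small[1]:.3f}")
print(f"large sample: z={large[0]:.2f}, p={large[1]:.3g}")
```

The observed lift is identical in both cases. Only the larger sample gives you grounds to believe it.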

Dashboard sprawl

The final trap is building too many dashboards because nobody wants to decide which few matter. That creates a scavenger hunt, not clarity.

A cleaner approach:

Keep one operating scorecard with the small set of metrics your team uses weekly

Archive dead dashboards instead of pretending they’re still relevant

Default to questions first when someone asks for a new chart

Require an owner for any core metric

If nobody owns the number, nobody will notice when it breaks.

Your Action Plan From Data to Decision

You don’t need a crash course in SQL. You need a simpler operating habit. Ask better questions, connect the right sources, and shorten the time between confusion and action.

TL;DR

Start with the core bottleneck

If your team waits days or weeks for basic answers, the problem isn’t curiosity. It’s access.

Treat startup data analysis as question-driven

Good analysis starts with prompts like “why did retention drop?” or “which channels bring users who activate?” Not “which dashboard should we build?”

Match metrics to your stage

Early teams need validation and engagement signals. Later teams need efficiency, retention depth, and clearer operating metrics.

Centralize only the data that matters now

Connect the systems that define acquisition, product usage, revenue, and support. Don’t boil the ocean.

Use repeatable workflows

Growth, retention, and unit economics cover most of the decisions startups make every week.

Watch for self-inflicted mistakes

Vanity metrics, definition drift, analysis paralysis, and dashboard clutter waste more time than missing software.

Use conversational analytics where it removes friction

Skip the SQL. Just ask your data a question and get a chart in seconds.

Start smaller than you think

One source. One question. One useful chart. That’s enough to change how a team works.

Try asking your data right now: “Show me my revenue by month for the last year as a bar chart.”

Then ask a second question based on what you see. That’s the whole game. Faster learning beats prettier reporting.

Connect your first data source in Statspresso and ask your first question in plain English. If your startup already has the data but not the time to wrangle SQL, that’s the fastest path from scattered numbers to a chart your team can use.

You’ve got questions piling up right now. Which channel is bringing customers who stick? Where is churn starting? Why did conversion dip after the last release? The answers exist, but they’re trapped in Postgres, HubSpot, Shopify, Stripe, spreadsheets, and one overworked analyst’s queue.

That bottleneck isn’t normal. It’s just old. Waiting two weeks for a dashboard that’s already stale by the time it lands is a relic. Startup data analysis should mean fast answers to urgent business questions, not tickets, exports, and SQL archaeology.

Stop Waiting for Data and Start Making Decisions

Most founders treat slow analytics like bad weather. Annoying, but unavoidable. It isn’t.

The cost of delay is brutal in startups because the margin for error is tiny. About 90% of startups fail overall, and the leading causes map directly to missing or late analysis: 42% fail because there’s no market need, and 29% run out of funding according to Exploding Topics startup statistics. If you learn too slowly, you validate the wrong thing, spend on the wrong thing, or keep a weak motion alive for too long.

That’s why I get impatient with the old playbook. A founder asks for a retention breakdown. Someone exports CSVs. Someone else writes SQL. A chart appears after three rounds of clarification. By then, the product team has already shipped something else and marketing has changed the campaign setup.

Practical rule: If your answer arrives after the decision window closed, you don’t have analytics. You have reporting.

The better model is simple. Ask a business question in plain English. Let the system translate that into the query, pull the data, and return a chart or number immediately. That is startup data analysis in practice. Not a museum exhibit of dashboards no one trusts.

A lot of teams still assume self-serve analytics means teaching everyone SQL or buying a bigger BI tool. It usually means the opposite. Fewer layers. Fewer handoffs. Less ceremony between question and answer.

What Startup Data Analysis Actually Means in 2026

“Startup data analysis” sounds more technical than it is. Strip away the jargon and it comes down to this: ask a business question, use your own data, get an answer you can act on.

That matters because the volume problem is getting worse, not better. Americans started 5.5 million businesses in 2023, and 2.5 quintillion bytes of data are generated daily. Startups using AI-powered analytics see productivity gains of up to 63% according to High5’s startup statistics roundup. Founders aren’t short on data. They’re short on time and clear interpretation.

It’s a conversation, not a reporting project

The old setup forced everyone to speak through a translator. You had to know SQL, understand schemas, and remember which table held the “real” subscription status. A human analyst sat in the middle and converted business questions into database logic.

That still works. It’s just slow.

A Conversational AI Data Analyst changes the interaction model. You ask, “Show me trial-to-paid conversion by signup month.” The system handles the translation, pulls from the connected sources, and returns the result as a chart, table, or summary. That’s Conversational Analytics in plain terms.

The useful definition of startup data analysis

If you’re a founder, PM, or growth lead, startup data analysis isn’t “doing BI.” It’s asking things like:

Acquisition questions such as which channels bring users who complete a key action

Product questions such as where activation stalls after signup

Revenue questions such as which customer segments expand fastest

Operations questions such as whether support load spikes after a release

None of those require you to become a data engineer. They require access, context, and a way to ask clearly.

Startup teams rarely need more dashboards first. They need fewer obstacles between curiosity and evidence.

What Automated BI looks like in real life

Good Automated BI doesn’t dump another dashboard folder on your team. It does three things well:

Connects scattered sources

Your product usage might live in Postgres. Leads sit in HubSpot. Orders come through Shopify. Tickets live somewhere else. Analysis only gets useful when those systems can be queried together.Translates plain English into analysis

This is the part non-technical teams care about most. Not because SQL is evil, but because writing and debugging queries is the wrong use of time for most operating teams.Returns answers in a usable format

A result should come back as something people can immediately discuss. A cohort chart. A funnel. A trend line. A short explanation.

Try asking your analytics tool: “Show me weekly active users who completed our core action over the last 12 weeks.”

Then ask: “Break that down by signup channel.”

That’s a proper startup workflow. One question leads to the next. You follow the signal instead of waiting for someone to package it for you.

What doesn’t count

A few things get mistaken for startup data analysis all the time:

Dashboard hoarding where every team gets twenty charts and no one knows which one matters

Spreadsheet relays where people re-export the same numbers every Monday

Metric tourism where leaders peek at graphs but don’t tie them to decisions

Tool collecting where buying software substitutes for deciding what questions matter

Real analysis reduces uncertainty around a decision. If it doesn’t help someone choose, prioritize, cut, or double down, it’s decoration.

Match Your Metrics to Your Startup Stage

Most startup metrics advice is written as if every company should track the same things from day one. That’s how teams end up obsessing over polished board metrics before they’ve proven anyone cares.

The useful way to approach startup data analysis is by stage. Early on, you’re looking for evidence of need and usage. Later, you care more about efficiency, repeatability, and scaling what already works.

Pre-seed and seed

At this stage, the dangerous mistake is tracking polished metrics that assume a stable business model. You probably don’t have one yet.

The right questions are messier:

Are new users reaching the first moment of value?

Do they come back without being chased?

Which user behaviors show genuine pull, not polite curiosity?

What are people complaining about in support, onboarding calls, or feedback forms?

You’re looking for engagement signals and product-market fit clues, not elegant financial storytelling.

A founder at this stage should care more about patterns like “users who import data in the first session return next week” than broad totals like signups. Total signups are easy to inflate. Meaningful usage is harder to fake.

Try asking your analytics tool:

“Show me the percentage of new users who completed our activation event within 7 days.”

“Chart retention by signup week.”

“List the most common themes in support tickets from new users.”

That last one matters more than many teams admit. Support tickets, review text, and survey answers often reveal unmet needs and competitive gaps long before a dashboard does.
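If you want to sanity-check what the 7-day activation prompt computes, here is a minimal Python sketch. The user IDs, dates, and the `activation_rate` helper are all made up for illustration. Your tool will have its own definition of "activation," so treat this as one reasonable reading, not the official one.

```python
from datetime import datetime, timedelta

# Hypothetical sample data: one signup timestamp per user.
signups = {
    "u1": datetime(2025, 1, 6),
    "u2": datetime(2025, 1, 7),
    "u3": datetime(2025, 1, 8),
    "u4": datetime(2025, 1, 9),
}

# Hypothetical activation events as (user_id, timestamp). u3 never activates.
activations = [
    ("u1", datetime(2025, 1, 8)),   # 2 days after signup
    ("u2", datetime(2025, 1, 20)),  # 13 days after signup, outside the window
    ("u4", datetime(2025, 1, 9)),   # same day as signup
]


def activation_rate(signups, activations, window_days=7):
    """Share of signed-up users whose first activation falls inside the window."""
    first_activation = {}
    for user_id, ts in activations:
        if user_id not in first_activation or ts < first_activation[user_id]:
            first_activation[user_id] = ts

    window = timedelta(days=window_days)
    activated = sum(
        1
        for user_id, signed_up in signups.items()
        if user_id in first_activation
        and timedelta(0) <= first_activation[user_id] - signed_up <= window
    )
    return activated / len(signups) if signups else 0.0


rate = activation_rate(signups, activations)
print(f"7-day activation rate: {rate:.0%}")
```

The denominator is everyone who signed up, not everyone who activated. That choice is what keeps the number honest when signups are easy to inflate.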

Early stage after initial traction

Once usage looks real and revenue starts to matter consistently, your questions change. You’re no longer asking only “do people want this?” You’re asking, “can we grow this without lighting cash on fire?”

At this stage, startup data analysis means connecting product, marketing, and revenue systems. You want to see whether the users you acquire are becoming the customers you hoped for.

The metric categories get sharper:

Acquisition efficiency

Which channels bring users who activate, retain, and convert, not just click?

Retention and cohort behavior

Are newer cohorts healthier than older ones? If not, your “growth” may just be a bigger top of funnel hiding a leaky product.

Sales and funnel movement

Where do opportunities stall? Do certain segments close faster? Does deal quality differ by source?

Cash discipline

You don’t need a wall-sized finance dashboard. You do need a clear view of what’s moving revenue and what’s draining attention.

A startup with traction can still die from blurry feedback loops. Growth hides mistakes for a while. Then the bill arrives.

Useful prompts here look like:

“Show conversion from lead to customer by acquisition source over time.”

“Create a cohort chart of retained users by signup month.”

“Compare churn patterns between customers acquired through paid channels and referrals.”

“Show product usage in the 30 days before cancellation.”

Those questions are better than asking for “the KPI dashboard.” They force the analysis toward choices.

Growth stage

By the time a startup has multiple teams, more channels, and a real operating rhythm, the problem changes again. It’s less about whether data exists and more about whether everyone means the same thing when they say “customer,” “active,” “churn,” or “revenue.”

At this point, the strongest metric set is usually small and cross-functional. A giant dashboard library creates a false sense of control. Teams need a handful of trusted indicators with clear owners.

Three habits matter:

Choose metrics tied to decisions

If no one changes behavior when the number moves, it probably shouldn’t sit in your core set.

Keep one stage-specific scorecard

Not a dashboard graveyard. A compact operating view.

Use prompts that compare, segment, and explain

“What changed?” is more useful than “what happened?”

Try prompts like:

“Show month-over-month expansion revenue by customer segment.”

“Which product actions correlate with renewal?”

“Compare support volume and churn after the last three product releases.”

The stage mismatch that wastes the most time

I see the same failure pattern constantly. A seed company copies a mature SaaS metric stack. They debate definitions, build dashboards, and monitor numbers that don’t guide a current decision.

Meanwhile, key questions sit unanswered:

Why do users drop after signup?

Which customer type gets value fastest?

What objection appears over and over in calls?

Which feature gets used once and never again?

Startup data analysis works when the questions are narrow enough to matter right now. If your team can’t name the next decision a metric should inform, don’t build the metric yet.

For more technical teams that still need SQL examples alongside self-serve analysis, an internal guide like The Guide to SQL Charting can help bridge that gap without turning every question into a custom reporting project.

Getting Your Data House in Order for Analysis

You can’t answer cross-functional questions if every system lives on its own little island. Product has one dataset. Marketing has another. Sales trusts the CRM. Finance trusts exports. Everyone claims they’re “data-driven,” and then the meeting turns into a debate about whose spreadsheet is least wrong.

That’s why the first job in startup data analysis is boring but unavoidable. Get the data into one place people can use.

Why ELT replaced old-school ETL

If you’ve heard both terms and mentally filed them under “annoying data plumbing,” fair enough. The practical difference is simple.

With ETL, teams try to clean and transform data before loading it into the warehouse. That sounds tidy. In practice, it often slows everything down.

With ELT, you extract the data, load it first, and transform it after. That approach fits startup speed much better. The ELT pipeline has become the standard for agile startups and can reduce data latency by 70% to 90% compared with older ETL methods, according to Weld’s guide to startup data analytics.
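To make the load-first, transform-later order concrete, here is a small sketch using Python's built-in `sqlite3` module as a stand-in for a warehouse. The table names (`raw_orders`, `orders_clean`) and the sample rows are assumptions for illustration, not anything a specific ELT vendor prescribes.

```python
import sqlite3

# In-memory database standing in for a warehouse (an assumption for this sketch).
conn = sqlite3.connect(":memory:")

# Extract + Load: land the rows exactly as they arrive, messy values included.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, email TEXT, amount TEXT)")
raw_rows = [
    ("1001", " Ana@Example.com ", "49.00"),
    ("1002", "ben@example.com", "19.50"),
    ("1003", "ANA@example.com", "49.00"),
]
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_rows)

# Transform: clean inside the warehouse, after loading, as a view on the raw table.
conn.execute(
    """
    CREATE VIEW orders_clean AS
    SELECT order_id,
           lower(trim(email)) AS email,
           CAST(amount AS REAL) AS amount
    FROM raw_orders
    """
)

revenue_by_customer = conn.execute(
    "SELECT email, SUM(amount) FROM orders_clean GROUP BY email ORDER BY email"
).fetchall()
print(revenue_by_customer)
```

Because the raw table is untouched, you can change the cleaning rules later without re-extracting anything from the source system.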

That matters because your questions don’t stay in one department. Once Shopify, HubSpot, Postgres, and other tools land in a central warehouse, you can ask things the old setup made painful:

Did customer support volume change after a release?

Which campaign source produces users who retain?

What happens to expansion revenue after a drop in product usage?

What a sane startup stack looks like

You do not need a miniature enterprise architecture diagram taped to the wall.

A workable setup usually has these pieces:

Operational sources like Postgres, HubSpot, Shopify, Stripe, or Linear

A warehouse where the raw data lands

A light modeling layer so core fields and business definitions make sense

An analytics interface that non-technical people can use without filing tickets

For some teams, outside help is useful during setup. If you need guidance on architecture or governance, reviews of data science consulting firms can help you compare the kinds of partners that support early-stage analytics builds.

The trap is overbuilding. Founders hear “single source of truth” and assume they need to model every object, event, and edge case before anyone can ask a question. They don’t. Start with the handful of systems that define customer acquisition, product usage, and revenue.

Centralization is not about collecting everything. It’s about connecting the few sources that answer your most expensive questions.

Instrument only what you’ll use

Tracking plans get bloated fast. Someone suggests logging every click, every hover, every page interaction, every background task. Then no one maintains the definitions, and six months later the event stream becomes landfill.

Track the actions that map to your business model. If you’re a SaaS product, that usually means a few core moments:

Signup and activation events

Key product actions tied to value

Subscription or purchase events

Cancellation or downgrade signals

Support and feedback touchpoints

When the raw data is messy, clean the highest-value fields first. Customer IDs, timestamps, event names, and revenue logic beat cosmetic cleanup every time. If your team is sorting through duplicate fields, missing labels, and inconsistent naming, this guide on how to clean up data is a practical place to start.

One connected question beats ten isolated dashboards

Founders often ask for a dashboard by department because that’s how the org chart looks. The better path is to center analysis on business questions.

For example:

“Which acquisition sources lead to the highest activation and lowest early churn?”

“Do accounts with more support tickets expand less?”

“Did release timing affect retention for new cohorts?”

Those answers require connected data, not prettier chart colors.

This is also the section where a tool can earn its keep. A platform such as Statspresso connects sources like Shopify, HubSpot, Linear, and Postgres so teams can ask plain-English questions and get charts back without writing SQL. That’s useful when the goal is self-serve analysis, not another reporting backlog.

Analysis Workflows You Can Use Today

The theory is straightforward. The daily work is where teams get stuck. Someone needs an answer. Nobody wants to open five tools, remember table joins, and spend the afternoon explaining why the metric definition changed.

The fix is to standardize a few core workflows that your startup will use constantly. For startups, these often fall into three buckets: growth, retention, and unit economics.

The old way and the new way of getting answers

| Task | Old Way (Manual SQL & BI Tools) | New Way (Statspresso) |
|---|---|---|
| Check monthly revenue trend | Ask analyst for a report, wait for query and chart build | Ask in plain English and get a chart in seconds |
| Compare channel quality | Pull data from ad platform, CRM, and product tables, then reconcile manually | Ask for activation or conversion by source across connected data |
| Review retention by cohort | Build or reuse cohort SQL, validate date logic, then export to BI | Request a cohort chart directly |
| Investigate churn risk | Join support, billing, and product activity tables manually | Ask what behaviors changed before cancellation |
| Share findings with team | Screenshot dashboard or send spreadsheet export | Share the generated chart and explanation |

Growth analysis

Growth analysis should answer one basic question: which inputs create useful customers, not just more traffic?

A lot of teams stop at top-of-funnel numbers because they’re easy to access. Clicks, signups, and sessions arrive quickly. The harder part is tracing quality through activation, conversion, and retention.

Useful growth workflow:

Start with source performance

Look at acquisition sources side by side, but don’t stop at volume.

Add product qualification

Ask which sources produce users who complete the core action.

Then check revenue outcome

Which channels lead to customers who stick or expand?
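The three steps above boil down to counting the same users at each stage, grouped by source. Here is a rough Python sketch of that idea. The `users` records, field names, and `funnel_by_source` helper are hypothetical, and a real version would pull from your connected sources instead of a hard-coded list.

```python
from collections import defaultdict

# Hypothetical user records: acquisition source plus two outcome flags.
users = [
    {"source": "paid_search", "activated": True,  "paid": False},
    {"source": "paid_search", "activated": False, "paid": False},
    {"source": "paid_search", "activated": False, "paid": False},
    {"source": "paid_search", "activated": True,  "paid": True},
    {"source": "referral",    "activated": True,  "paid": True},
    {"source": "referral",    "activated": True,  "paid": False},
]


def funnel_by_source(users):
    """Count signups, activations, and paid conversions per acquisition source."""
    counts = defaultdict(lambda: {"signups": 0, "activated": 0, "paid": 0})
    for user in users:
        row = counts[user["source"]]
        row["signups"] += 1
        row["activated"] += user["activated"]  # True counts as 1, False as 0
        row["paid"] += user["paid"]
    return {
        source: {
            **row,
            "activation_rate": row["activated"] / row["signups"],
            "paid_rate": row["paid"] / row["signups"],
        }
        for source, row in counts.items()
    }


funnel = funnel_by_source(users)
for source, row in sorted(funnel.items()):
    print(source, row)
```

In this toy data, paid search wins on volume and loses on every rate. That is exactly the pattern a volume-only view hides.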

Try these prompts:

“Show signups, activation, and paid conversion by acquisition source for the last 90 days.”

“Which traffic sources bring users who complete our key product action within 7 days?”

“Compare revenue by first-touch source and last-touch source.”

This is where AI-led analysis proves more useful than static reporting. Startups using AI-driven predictive analytics reduce market missteps by 30% to 50%, and AI models can process multivariate time-series data 100x faster than manual SQL queries, according to HubSpot’s discussion of AI data analysis for startups. In practice, that means the system can inspect patterns across behavior, timing, and conversion signals much faster than a person stitching queries together by hand.

Don’t ask, “Which channel has the most leads?” Ask, “Which channel produces users who become healthy customers?”

Retention analysis

Retention is where startup data analysis usually gets honest. Acquisition can flatter you for months. Retention tells you whether the product is worth returning to.

The most effective retention workflow is cohort-first. Group users by signup period, then compare how their activity changes over time. If later cohorts are weaker, something in onboarding, targeting, or product experience probably slipped.
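For the curious, a cohort table is less mysterious than it sounds. Below is a minimal Python sketch that groups users by signup month and measures what share of each cohort was active in each later month. The data, the calendar-month bucketing, and the `cohort_retention` helper are assumptions for illustration; real tools may define "active" and cohort windows differently.

```python
from collections import defaultdict
from datetime import date

# Hypothetical data: signup date per user and the dates each user was active.
signup_dates = {
    "u1": date(2025, 1, 10),
    "u2": date(2025, 1, 25),
    "u3": date(2025, 2, 3),
}
activity = [
    ("u1", date(2025, 1, 12)), ("u1", date(2025, 2, 14)), ("u1", date(2025, 3, 2)),
    ("u2", date(2025, 1, 26)),
    ("u3", date(2025, 2, 5)), ("u3", date(2025, 3, 9)),
]


def month_index(d):
    """Turn a date into a running month number so offsets are simple subtraction."""
    return d.year * 12 + d.month


def cohort_retention(signup_dates, activity):
    """Map (year, month) cohort -> {months since signup -> share of cohort active}."""
    cohort_users = defaultdict(set)
    for user_id, signed_up in signup_dates.items():
        cohort_users[(signed_up.year, signed_up.month)].add(user_id)

    active = defaultdict(set)  # (cohort, month offset) -> users active in that month
    for user_id, day in activity:
        signed_up = signup_dates[user_id]
        offset = month_index(day) - month_index(signed_up)
        if offset >= 0:
            active[((signed_up.year, signed_up.month), offset)].add(user_id)

    table = defaultdict(dict)
    for (cohort, offset), user_ids in active.items():
        table[cohort][offset] = len(user_ids) / len(cohort_users[cohort])
    return dict(table)


retention = cohort_retention(signup_dates, activity)
for cohort in sorted(retention):
    print(cohort, dict(sorted(retention[cohort].items())))
```

Reading across a row shows how one cohort decays. Reading down a column compares cohorts at the same age, which is where a slipping onboarding flow shows up.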

Strong retention questions include:

What do retained users do early that churned users don’t?

Did product changes improve repeat behavior or just shift first-week activity?

Are there support or onboarding signals that appear before drop-off?

Copy-paste prompts:

“Show me a cohort chart of user retention by signup month.”

“Compare first-week behaviors between retained users and churned users.”

“Show support ticket categories for accounts that canceled within 60 days.”

“Which features are most used by customers who renew?”

Unstructured data matters here. Support tickets, NPS comments, community posts, and app reviews often show the reason before the metric shows the symptom. If multiple customers complain about the same limitation while still using the product heavily, that’s often a competitive gap worth quantifying.

A practical workflow is:

Pull cancellation, downgrade, or inactive-user segments.

Layer in ticket themes and feedback text.

Compare those themes against healthy accounts.

Prioritize fixes where complaint frequency and account value overlap.
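The four steps above can be sketched in a few lines of Python. This assumes tickets have already been tagged with a theme, which is the hard part in real life. The accounts, themes, `mrr` field, and `theme_priorities` helper are all hypothetical.

```python
from collections import Counter

# Hypothetical accounts with a status and a monthly value.
accounts = {
    "a1": {"status": "churned", "mrr": 500},
    "a2": {"status": "churned", "mrr": 100},
    "a3": {"status": "healthy", "mrr": 300},
    "a4": {"status": "healthy", "mrr": 200},
}

# Hypothetical tickets, already tagged with a theme: (account_id, theme).
tickets = [
    ("a1", "export"), ("a1", "billing"),
    ("a2", "export"),
    ("a3", "billing"),
    ("a4", "onboarding"),
]


def theme_priorities(accounts, tickets):
    """Rank themes seen in churned accounts by the account value behind them."""
    churned = Counter()
    healthy = Counter()
    churned_mrr = Counter()
    # Deduplicate so one noisy account cannot inflate a theme.
    for account_id, theme in set(tickets):
        account = accounts[account_id]
        if account["status"] == "churned":
            churned[theme] += 1
            churned_mrr[theme] += account["mrr"]
        else:
            healthy[theme] += 1

    rows = [
        {
            "theme": theme,
            "churned_accounts": churned[theme],
            "healthy_accounts": healthy[theme],
            "churned_mrr": churned_mrr[theme],
        }
        for theme in churned
    ]
    return sorted(
        rows,
        key=lambda r: (r["churned_mrr"], r["churned_accounts"]),
        reverse=True,
    )


priorities = theme_priorities(accounts, tickets)
for row in priorities:
    print(row)
```

The healthy-account column is the comparison step. A theme that shows up just as often in healthy accounts is an annoyance. One that clusters in churned, high-value accounts is a priority.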

That’s not glamorous BI. It’s how teams figure out what’s driving churn.

Unit economics analysis

Plenty of startup teams postpone unit economics because it sounds like finance homework. That’s a mistake. You don’t need a giant model. You need clarity on whether growth is getting more efficient or less.

Three lenses usually matter:

Acquisition cost versus downstream value

Time from acquisition to revenue

Segment differences in payback and churn risk

Useful prompts:

“Compare customer acquisition cost trends with conversion and churn by month.”

“Show average revenue per customer by acquisition source.”

“Which customer segments have the highest churn after discounting?”

“Break down expansion, contraction, and churn by account segment.”

When teams do this manually, the pain is predictable. Finance has one export. Marketing has another. Product data lives elsewhere. Dates don’t line up. Definitions drift. By the time the model is ready, nobody trusts it.
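The arithmetic itself is not the hard part. Here is a minimal Python sketch of acquisition cost and payback by channel, assuming the inputs already agree on dates and definitions. The channel figures, the 80% gross margin, and the `unit_economics` helper are placeholders I made up for illustration, not benchmarks.

```python
# Hypothetical monthly inputs per acquisition source.
channels = {
    "paid_social": {"spend": 6000.0, "new_customers": 20, "monthly_revenue_per_customer": 50.0},
    "referral": {"spend": 1000.0, "new_customers": 10, "monthly_revenue_per_customer": 80.0},
}


def unit_economics(channels, gross_margin=0.8):
    """Compute CAC and payback months per channel.

    gross_margin is an assumed placeholder; use your own figure.
    """
    result = {}
    for name, channel in channels.items():
        # Customer acquisition cost: spend divided by customers acquired.
        cac = channel["spend"] / channel["new_customers"]
        # Margin earned per customer per month.
        monthly_margin = channel["monthly_revenue_per_customer"] * gross_margin
        result[name] = {
            "cac": cac,
            # Months of margin needed to earn back the acquisition cost.
            "payback_months": cac / monthly_margin,
        }
    return result


economics = unit_economics(channels)
for name, row in economics.items():
    print(f"{name}: CAC {row['cac']:.0f}, payback {row['payback_months']:.1f} months")
```

Payback here ignores churn, so it flatters channels whose customers leave early. Pair it with retention by source before you shift budget.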

A workflow for idea validation and competitive gaps

There’s one workflow I wish more startups used early: analyzing customer complaints and feedback to spot underserved needs. Founders love “market research,” but many skip the richest source sitting inside their own operations.

Ask questions like:

“Summarize the most frequent complaint themes from support tickets in the last quarter.”

“Compare high-usage customers with low satisfaction feedback.”

“Which feature requests appear most often among retained users?”

“Group negative review text by theme and account type.”

That sort of analysis does two useful things. It helps you prioritize product work, and it shows where the market is still under-served. Startups often chase feature parity while customer text is practically begging them to fix a more painful workflow.

Common Data Pitfalls and How to Sidestep Them

Most startup data problems aren’t caused by missing tools. They come from bad habits with nice charts wrapped around them.

Vanity metrics

Total signups, raw pageviews, total followers. They look good in a slide. They rarely tell you what to do next.

The antidote is simple. Tie every metric to a meaningful behavior or business outcome. Instead of “how many users signed up,” ask “how many users signed up and completed the action that predicts value?”

A useful check is this: if the number doubles tomorrow, would you celebrate without asking a follow-up question? If yes, it might be vanity.

Analysis paralysis

Some teams stall because they want perfect tracking, perfect definitions, and perfect confidence before acting. Startups don’t get that luxury.

Use a decision threshold instead. Ask, “Do we have enough signal to choose the next experiment, cut the weak channel, or prioritize the fix?” If yes, move.

Good startup analysis narrows uncertainty enough to act. It doesn’t eliminate uncertainty.

Confirmation bias

Founders are especially vulnerable here because conviction is part of the job. The danger starts when you only ask questions that can confirm your favorite theory.

Counter it by forcing one disconfirming question into the workflow. If you think a feature improves retention, ask what happened to the users who adopted it late, or whether the same pattern appears in segments you didn’t expect.

Inconsistent definitions

Marketing says a customer is a lead who booked a demo. Product says it’s someone who activated. Finance says it’s a paying account. None of them are wrong. They’re just answering different questions.

Fix this by naming the business object precisely. Paying customer. Activated workspace. Closed-won account. Trial user. Clean labels save hours of fake disagreement.
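One lightweight way to keep those labels from drifting is to write each definition down once, by name, and count against it. A small Python sketch of the idea follows. The account fields and the `DEFINITIONS` names are invented for illustration.

```python
# Hypothetical account records; field names are assumptions for illustration.
accounts = [
    {"id": "a1", "booked_demo": True,  "activated": False, "paying": False},
    {"id": "a2", "booked_demo": True,  "activated": True,  "paying": False},
    {"id": "a3", "booked_demo": False, "activated": True,  "paying": True},
]

# One precise name per business object, each with exactly one rule.
DEFINITIONS = {
    "demo_booked_lead": lambda account: account["booked_demo"],
    "activated_workspace": lambda account: account["activated"],
    "paying_customer": lambda account: account["paying"],
}


def count_by_definition(accounts):
    """Count how many accounts satisfy each named definition."""
    return {
        name: sum(1 for account in accounts if rule(account))
        for name, rule in DEFINITIONS.items()
    }


counts = count_by_definition(accounts)
print(counts)
```

Three teams can now report three different numbers without arguing, because nobody is calling all three "customers."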

False confidence from tiny samples

Not every change means something real. That’s where a basic grasp of experiment design matters. If your team is running product or landing page tests, a practical explainer on testing statistical significance is worth sharing internally so people stop declaring victory after one noisy result.
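To show why sample size matters, here is a short Python sketch of a two-proportion z-test, one common way to compare two conversion rates. It is an illustration under textbook assumptions (independent samples, large enough counts for the normal approximation), not a replacement for a proper experiment design. The function name and the example numbers are mine.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Same 10% vs 20% conversion rates, very different sample sizes.
z_small, p_small = two_proportion_z_test(4, 40, 8, 40)
z_large, p_large = two_proportion_z_test(400, 4000, 800, 4000)

print(f"small sample: z={z_small:.2f}, p={p_small:.3f}")
print(f"large sample: z={z_large:.2f}, p={p_large:.3g}")
```

Both tests compare a 10% rate against a 20% rate. With 40 users per arm, that gap is well within noise. With 4,000 per arm, it is not. Same headline, opposite conclusion.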

Dashboard sprawl

The final trap is building too many dashboards because nobody wants to decide which few matter. That creates a scavenger hunt, not clarity.

A cleaner approach:

Keep one operating scorecard with the small set of metrics your team uses weekly

Archive dead dashboards instead of pretending they’re still relevant

Default to questions first when someone asks for a new chart

Require an owner for any core metric

If nobody owns the number, nobody will notice when it breaks.

Your Action Plan From Data to Decision

You don’t need a crash course in SQL. You need a simpler operating habit. Ask better questions, connect the right sources, and shorten the time between confusion and action.

TL;DR

Start with the core bottleneck

If your team waits days or weeks for basic answers, the problem isn’t curiosity. It’s access.

Treat startup data analysis as question-driven

Good analysis starts with prompts like “why did retention drop?” or “which channels bring users who activate?” Not “which dashboard should we build?”

Match metrics to your stage

Early teams need validation and engagement signals. Later teams need efficiency, retention depth, and clearer operating metrics.

Centralize only the data that matters now

Connect the systems that define acquisition, product usage, revenue, and support. Don’t boil the ocean.

Use repeatable workflows

Growth, retention, and unit economics cover most of the decisions startups make every week.

Watch for self-inflicted mistakes

Vanity metrics, definition drift, analysis paralysis, and dashboard clutter waste more time than missing software.

Use conversational analytics where it removes friction

Skip the SQL. Just ask your data a question and get a chart in seconds.

Start smaller than you think

One source. One question. One useful chart. That’s enough to change how a team works.

Try asking your data right now: “Show me my revenue by month for the last year as a bar chart.”

Then ask a second question based on what you see. That’s the whole game. Faster learning beats prettier reporting.

Connect your first data source in Statspresso and ask your first question in plain English. If your startup already has the data but not the time to wrangle SQL, that’s the fastest path from scattered numbers to a chart your team can use.

This is the part non-technical teams care about most. Not because SQL is evil, but because writing and debugging queries is the wrong use of time for most operating teams.Returns answers in a usable format

A result should come back as something people can immediately discuss. A cohort chart. A funnel. A trend line. A short explanation.

Try asking your analytics tool: “Show me weekly active users who completed our core action over the last 12 weeks.”

Then ask: “Break that down by signup channel.”

That’s a proper startup workflow. One question leads to the next. You follow the signal instead of waiting for someone to package it for you.

What doesn’t count

A few things get mistaken for startup data analysis all the time:

Dashboard hoarding where every team gets twenty charts and no one knows which one matters

Spreadsheet relays where people re-export the same numbers every Monday

Metric tourism where leaders peek at graphs but don’t tie them to decisions

Tool collecting where buying software substitutes for deciding what questions matter

Real analysis reduces uncertainty around a decision. If it doesn’t help someone choose, prioritize, cut, or double down, it’s decoration.

Match Your Metrics to Your Startup Stage

Most startup metrics advice is written as if every company should track the same things from day one. That’s how teams end up obsessing over polished board metrics before they’ve proven anyone cares.

The useful way to approach startup data analysis is by stage. Early on, you’re looking for evidence of need and usage. Later, you care more about efficiency, repeatability, and scaling what already works.

Pre-seed and seed

At this stage, the dangerous mistake is tracking polished metrics that assume a stable business model. You probably don’t have one yet.

The right questions are messier:

Are new users reaching the first moment of value?

Do they come back without being chased?

Which user behaviors show genuine pull, not polite curiosity?

What are people complaining about in support, onboarding calls, or feedback forms?

You’re looking for engagement signals and product-market fit clues, not elegant financial storytelling.

A founder at this stage should care more about patterns like “users who import data in the first session return next week” than broad totals like signups. Total signups are easy to inflate. Meaningful usage is harder to fake.

Try asking your analytics tool:

“Show me the percentage of new users who completed our activation event within 7 days.”

“Chart retention by signup week.”

“List the most common themes in support tickets from new users.”

That last one matters more than many teams admit. Support tickets, review text, and survey answers often reveal unmet needs and competitive gaps long before a dashboard does.

Early stage after initial traction

Once usage looks real and revenue starts to matter consistently, your questions change. You’re no longer asking only “do people want this?” You’re asking, “can we grow this without lighting cash on fire?”

Startup data analysis requires connecting product, marketing, and revenue systems. You want to see whether the users you acquire are becoming the customers you hoped for.

The metric categories get sharper:

Acquisition efficiency

Which channels bring users who activate, retain, and convert, not just click?Retention and cohort behavior

Are newer cohorts healthier than older ones? If not, your “growth” may just be a bigger top of funnel hiding a leaky product.Sales and funnel movement

Where do opportunities stall? Do certain segments close faster? Does deal quality differ by source?Cash discipline

You don’t need a wall-sized finance dashboard. You do need a clear view of what’s moving revenue and what’s draining attention.

A startup with traction can still die from blurry feedback loops. Growth hides mistakes for a while. Then the bill arrives.

Useful prompts here look like:

“Show conversion from lead to customer by acquisition source over time.”

“Create a cohort chart of retained users by signup month.”

“Compare churn patterns between customers acquired through paid channels and referrals.”

“Show product usage in the 30 days before cancellation.”

Those questions are better than asking for “the KPI dashboard.” They force the analysis toward choices.

Growth stage

By the time a startup has multiple teams, more channels, and a real operating rhythm, the problem changes again. It’s less about whether data exists and more about whether everyone means the same thing when they say “customer,” “active,” “churn,” or “revenue.”

At this point, the strongest metric set is usually small and cross-functional. A giant dashboard library creates a false sense of control. Teams need a handful of trusted indicators with clear owners.

Three habits matter:

Choose metrics tied to decisions

If no one changes behavior when the number moves, it probably shouldn’t sit in your core set.Keep one stage-specific scorecard

Not a dashboard graveyard. A compact operating view.Use prompts that compare, segment, and explain

“What changed?” is more useful than “what happened?”

Try prompts like:

“Show month-over-month expansion revenue by customer segment.”

“Which product actions correlate with renewal?”

“Compare support volume and churn after the last three product releases.”

The stage mismatch that wastes the most time

I see the same failure pattern constantly. A seed company copies a mature SaaS metric stack. They debate definitions, build dashboards, and monitor numbers that don’t guide a current decision.

Meanwhile, key questions sit unanswered:

Why do users drop after signup?

Which customer type gets value fastest?

What objection appears over and over in calls?

Which feature gets used once and never again?

Startup data analysis works when the questions are narrow enough to matter right now. If your team can’t name the next decision a metric should inform, don’t build the metric yet.

For more technical teams that still need SQL examples alongside self-serve analysis, an internal guide like The Guide to SQL Charting can help bridge that gap without turning every question into a custom reporting project.

Getting Your Data House in Order for Analysis

You can’t answer cross-functional questions if every system lives on its own little island. Product has one dataset. Marketing has another. Sales trusts the CRM. Finance trusts exports. Everyone claims they’re “data-driven,” and then the meeting turns into a debate about whose spreadsheet is least wrong.

That’s why the first job in startup data analysis is boring but unavoidable. Get the data into one place people can use.

Why ELT replaced old-school ETL

If you’ve heard both terms and mentally filed them under “annoying data plumbing,” fair enough. The practical difference is simple.

With ETL, teams try to clean and transform data before loading it into the warehouse. That sounds tidy. In practice, it often slows everything down.

With ELT, you extract the data, load it first, and transform it after. That approach fits startup speed much better. The ELT pipeline has become the standard for agile startups and can reduce data latency by 70% to 90% compared with older ETL methods, according to Weld’s guide to startup data analytics.

That matters because your questions don’t stay in one department. Once Shopify, HubSpot, Postgres, and other tools land in a central warehouse, you can ask things the old setup made painful:

Did customer support volume change after a release?

Which campaign source produces users who retain?

What happens to expansion revenue after a drop in product usage?

What a sane startup stack looks like

You do not need a miniature enterprise architecture diagram taped to the wall.

A workable setup usually has these pieces:

Operational sources like Postgres, HubSpot, Shopify, Stripe, or Linear

A warehouse where the raw data lands

A light modeling layer so core fields and business definitions make sense

An analytics interface that non-technical people can use without filing tickets

For some teams, outside help is useful during setup. If you need guidance on architecture or governance, reviews of data science consulting firms can help you compare the kinds of partners that support early-stage analytics builds.

The trap is overbuilding. Founders hear “single source of truth” and assume they need to model every object, event, and edge case before anyone can ask a question. They don’t. Start with the handful of systems that define customer acquisition, product usage, and revenue.

Centralization is not about collecting everything. It’s about connecting the few sources that answer your most expensive questions.

Instrument only what you’ll use

Tracking plans get bloated fast. Someone suggests logging every click, every hover, every page interaction, every background task. Then no one maintains the definitions, and six months later the event stream becomes landfill.

Track the actions that map to your business model. If you’re a SaaS product, that usually means a few core moments:

Signup and activation events

Key product actions tied to value

Subscription or purchase events

Cancellation or downgrade signals

Support and feedback touchpoints

When the raw data is messy, clean the highest-value fields first. Customer IDs, timestamps, event names, and revenue logic beat cosmetic cleanup every time. If your team is sorting through duplicate fields, missing labels, and inconsistent naming, this guide on how to clean up data is a practical place to start.

One connected question beats ten isolated dashboards

Founders often ask for a dashboard by department because that’s how the org chart looks. The better path is to center analysis on business questions.

For example:

“Which acquisition sources lead to the highest activation and lowest early churn?”

“Do accounts with more support tickets expand less?”

“Did release timing affect retention for new cohorts?”

Those answers require connected data, not prettier chart colors.

This is also the section where a tool can earn its keep. A platform such as Statspresso connects sources like Shopify, HubSpot, Linear, and Postgres so teams can ask plain-English questions and get charts back without writing SQL. That’s useful when the goal is self-serve analysis, not another reporting backlog.

Analysis Workflows You Can Use Today

The theory is straightforward. The daily work is where it gets messy. Someone needs an answer. Nobody wants to open five tools, remember table joins, and spend the afternoon explaining why the metric definition changed.

The fix is to standardize a few core workflows that your startup will use constantly. For startups, these often fall into three buckets: growth, retention, and unit economics.

The old way and the new way of getting answers

| Task | Old Way (Manual SQL & BI Tools) | New Way (Statspresso) |
|---|---|---|
| Check monthly revenue trend | Ask analyst for a report, wait for query and chart build | Ask in plain English and get a chart in seconds |
| Compare channel quality | Pull data from ad platform, CRM, and product tables, then reconcile manually | Ask for activation or conversion by source across connected data |
| Review retention by cohort | Build or reuse cohort SQL, validate date logic, then export to BI | Request a cohort chart directly |
| Investigate churn risk | Join support, billing, and product activity tables manually | Ask what behaviors changed before cancellation |
| Share findings with team | Screenshot dashboard or send spreadsheet export | Share the generated chart and explanation |

Growth analysis

Growth analysis should answer one basic question: which inputs create useful customers, not just more traffic?

A lot of teams stop at top-of-funnel numbers because they’re easy to access. Clicks, signups, and sessions arrive quickly. The harder part is tracing quality through activation, conversion, and retention.

Useful growth workflow:

Start with source performance. Look at acquisition sources side by side, but don’t stop at volume.

Add product qualification. Ask which sources produce users who complete the core action.

Then check revenue outcome. Which channels lead to customers who stick or expand?
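The three steps above can be sketched in a few lines of Python. This is an illustration only: the user records, source names, and the `funnel_by_source` helper are hypothetical, and "activated" stands for whatever your core product action is.

```python
from collections import defaultdict

# Hypothetical user records: (acquisition source, activated?, became paying?)
USERS = [
    ("paid_search", True, True),
    ("paid_search", False, False),
    ("paid_search", False, False),
    ("paid_search", False, False),
    ("referral", True, True),
    ("referral", True, False),
]


def funnel_by_source(users):
    """Count signups, activations, and paid conversions per source."""
    counts = defaultdict(lambda: {"signups": 0, "activated": 0, "paid": 0})
    for source, activated, paid in users:
        row = counts[source]
        row["signups"] += 1
        row["activated"] += int(activated)
        row["paid"] += int(paid)

    report = {}
    for source, row in counts.items():
        report[source] = {
            **row,
            # Rates are per signup, so volume alone cannot flatter a source.
            "activation_rate": row["activated"] / row["signups"],
            "paid_rate": row["paid"] / row["signups"],
        }
    return report


report = funnel_by_source(USERS)
for source, row in sorted(report.items()):
    print(source, row)
```

In this made-up data, paid search wins on volume while referral wins on quality, which is exactly the distinction a volume-only report hides.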

Try these prompts:

“Show signups, activation, and paid conversion by acquisition source for the last 90 days.”

“Which traffic sources bring users who complete our key product action within 7 days?”

“Compare revenue by first-touch source and last-touch source.”

This is where AI-led analysis proves more useful than static reporting. Startups using AI-driven predictive analytics reduce market missteps by 30% to 50%, and AI models can process multivariate time-series data 100x faster than manual SQL queries, according to HubSpot’s discussion of AI data analysis for startups. In practice, that means the system can inspect patterns across behavior, timing, and conversion signals much faster than a person stitching queries together by hand.

Don’t ask, “Which channel has the most leads?” Ask, “Which channel produces users who become healthy customers?”

Retention analysis

Retention is where startup data analysis usually gets honest. Acquisition can flatter you for months. Retention tells you whether the product is worth returning to.

The most effective retention workflow is cohort-first. Group users by signup period, then compare how their activity changes over time. If later cohorts are weaker, something in onboarding, targeting, or product experience probably slipped.
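For readers who want to see what a cohort chart computes, here is a minimal sketch. It assumes monthly cohorts and counts a user as retained in a month if they have at least one activity event in it; the user IDs, dates, and function names are made up for illustration.

```python
from collections import defaultdict
from datetime import date

# Hypothetical signup dates and activity events.
SIGNUPS = {
    "u1": date(2024, 1, 10),
    "u2": date(2024, 1, 20),
    "u3": date(2024, 2, 3),
}
ACTIVITY = [
    ("u1", date(2024, 1, 15)),
    ("u1", date(2024, 2, 2)),
    ("u2", date(2024, 1, 25)),
    ("u3", date(2024, 2, 10)),
    ("u3", date(2024, 3, 1)),
]


def month_index(d):
    """Turn a date into a running month number so months can be subtracted."""
    return d.year * 12 + d.month


def cohort_retention(signups, activity):
    """Return {cohort_month: {months_since_signup: share of cohort active}}."""
    cohort_users = defaultdict(set)
    for user, signed in signups.items():
        cohort_users[signed.strftime("%Y-%m")].add(user)

    active = defaultdict(set)  # (cohort, month offset) -> active users
    for user, day in activity:
        signed = signups[user]
        offset = month_index(day) - month_index(signed)
        if offset >= 0:
            active[(signed.strftime("%Y-%m"), offset)].add(user)

    table = defaultdict(dict)
    for (cohort, offset), users in active.items():
        table[cohort][offset] = len(users) / len(cohort_users[cohort])
    return {c: dict(sorted(r.items())) for c, r in sorted(table.items())}


retention = cohort_retention(SIGNUPS, ACTIVITY)
for cohort, row in retention.items():
    print(cohort, row)
```

Reading across a row shows how one cohort decays; reading down a column shows whether later cohorts hold up better or worse at the same age.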

Strong retention questions include:

What do retained users do early that churned users don’t?

Did product changes improve repeat behavior or just shift first-week activity?

Are there support or onboarding signals that appear before drop-off?

Copy-paste prompts:

“Show me a cohort chart of user retention by signup month.”

“Compare first-week behaviors between retained users and churned users.”

“Show support ticket categories for accounts that canceled within 60 days.”

“Which features are most used by customers who renew?”

Unstructured data matters here. Support tickets, NPS comments, community posts, and app reviews often show the reason before the metric shows the symptom. If multiple customers complain about the same limitation while still using the product heavily, that’s often a competitive gap worth quantifying.

A practical workflow is:

Pull cancellation, downgrade, or inactive-user segments.

Layer in ticket themes and feedback text.

Compare those themes against healthy accounts.

Prioritize fixes where complaint frequency and account value overlap.
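The four steps above can be sketched as a small ranking exercise. This assumes tickets have already been tagged with a theme (by a person or a model); the accounts, themes, and MRR figures are hypothetical.

```python
from collections import defaultdict

# Hypothetical accounts with churn status and monthly recurring revenue.
ACCOUNTS = {
    "a1": {"churned": True, "mrr": 500},
    "a2": {"churned": True, "mrr": 100},
    "a3": {"churned": False, "mrr": 300},
    "a4": {"churned": False, "mrr": 200},
}
# Hypothetical support tickets, already labeled with a theme.
TICKETS = [
    ("a1", "export"),
    ("a2", "export"),
    ("a2", "billing"),
    ("a3", "billing"),
    ("a4", "onboarding"),
    ("a1", "export"),
]


def theme_priorities(accounts, tickets):
    """Rank ticket themes by the revenue of churned accounts that raised them."""
    churned_by_theme = defaultdict(set)
    healthy_by_theme = defaultdict(set)
    for account, theme in tickets:
        bucket = churned_by_theme if accounts[account]["churned"] else healthy_by_theme
        bucket[theme].add(account)  # sets count each account once per theme

    rows = []
    for theme in set(churned_by_theme) | set(healthy_by_theme):
        churned = churned_by_theme.get(theme, set())
        rows.append({
            "theme": theme,
            "churned_accounts": len(churned),
            "healthy_accounts": len(healthy_by_theme.get(theme, set())),
            "churned_mrr": sum(accounts[a]["mrr"] for a in churned),
        })
    # Highest lost revenue first; theme name breaks ties for stable output.
    return sorted(rows, key=lambda r: (-r["churned_mrr"], r["theme"]))


priorities = theme_priorities(ACCOUNTS, TICKETS)
for row in priorities:
    print(row)
```

A theme that shows up in churned accounts but rarely in healthy ones, and carries meaningful revenue, is a stronger candidate than one that everybody complains about equally.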

That’s not glamorous BI. It’s how teams figure out what’s driving churn.

Unit economics analysis

Plenty of startup teams postpone unit economics because it sounds like finance homework. That’s a mistake. You don’t need a giant model. You need clarity on whether growth is getting more efficient or less.

Three lenses usually matter:

Acquisition cost versus downstream value

Time from acquisition to revenue

Segment differences in payback and churn risk
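As a rough illustration of the first two lenses, the sketch below uses two common definitions that are assumptions here, not something this guide prescribes: customer acquisition cost as spend divided by new customers, and payback as that cost divided by monthly gross profit per customer. The channel names and figures are invented.

```python
# Hypothetical monthly figures per acquisition channel.
CHANNELS = {
    "paid_search": {"spend": 12000, "new_customers": 40, "monthly_revenue": 50, "gross_margin": 0.8},
    "referral": {"spend": 2000, "new_customers": 20, "monthly_revenue": 50, "gross_margin": 0.8},
}


def acquisition_cost(spend, new_customers):
    """Customer acquisition cost: spend divided by customers won."""
    if new_customers <= 0:
        raise ValueError("new_customers must be positive")
    return spend / new_customers


def payback_months(cac, monthly_revenue, gross_margin):
    """Months of gross profit per customer needed to recover the acquisition cost."""
    monthly_profit = monthly_revenue * gross_margin
    if monthly_profit <= 0:
        raise ValueError("monthly gross profit must be positive")
    return cac / monthly_profit


summary = {}
for name, c in CHANNELS.items():
    cac = acquisition_cost(c["spend"], c["new_customers"])
    summary[name] = {
        "cac": cac,
        "payback_months": payback_months(cac, c["monthly_revenue"], c["gross_margin"]),
    }

for name, row in summary.items():
    print(name, row)
```

Tracking these two numbers by month and by segment is usually enough to tell whether growth is getting more efficient or less.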

Useful prompts:

“Compare customer acquisition cost trends with conversion and churn by month.”

“Show average revenue per customer by acquisition source.”

“Which customer segments have the highest churn after discounting?”

“Break down expansion, contraction, and churn by account segment.”

When teams do this manually, the pain is predictable. Finance has one export. Marketing has another. Product data lives elsewhere. Dates don’t line up. Definitions drift. By the time the model is ready, nobody trusts it.

A workflow for idea validation and competitive gaps

There’s one workflow I wish more startups used early: analyzing customer complaints and feedback to spot underserved needs. Founders love “market research,” but many skip the richest source sitting inside their own operations.

Ask questions like:

“Summarize the most frequent complaint themes from support tickets in the last quarter.”

“Compare high-usage customers with low satisfaction feedback.”

“Which feature requests appear most often among retained users?”

“Group negative review text by theme and account type.”

That sort of analysis does two useful things. It helps you prioritize product work, and it shows where the market is still under-served. Startups often chase feature parity while customer text is practically begging them to fix a more painful workflow.

Common Data Pitfalls and How to Sidestep Them

Most startup data problems aren’t caused by missing tools. They come from bad habits with nice charts wrapped around them.

Vanity metrics

Total signups, raw pageviews, total followers. They look good in a slide. They rarely tell you what to do next.

The antidote is simple. Tie every metric to a meaningful behavior or business outcome. Instead of “how many users signed up,” ask “how many users signed up and completed the action that predicts value?”

A useful check is this: if the number doubles tomorrow, would you celebrate without asking a follow-up question? If yes, it might be vanity.

Analysis paralysis

Some teams stall because they want perfect tracking, perfect definitions, and perfect confidence before acting. Startups don’t get that luxury.

Use a decision threshold instead. Ask, “Do we have enough signal to choose the next experiment, cut the weak channel, or prioritize the fix?” If yes, move.

Good startup analysis narrows uncertainty enough to act. It doesn’t eliminate uncertainty.

Confirmation bias

Founders are especially vulnerable here because conviction is part of the job. The danger starts when you only ask questions that can confirm your favorite theory.

Counter it by forcing one disconfirming question into the workflow. If you think a feature improves retention, ask what happened to the users who adopted it late, or whether the same pattern appears in segments you didn’t expect.

Inconsistent definitions

Marketing says a customer is a lead who booked a demo. Product says it’s someone who activated. Finance says it’s a paying account. None of them are wrong. They’re just answering different questions.

Fix this by naming the business object precisely. Paying customer. Activated workspace. Closed-won account. Trial user. Clean labels save hours of fake disagreement.

False confidence from tiny samples

Not every change means something real. That’s where a basic grasp of experiment design matters. If your team is running product or landing page tests, a practical explainer on testing statistical significance is worth sharing internally so people stop declaring victory after one noisy result.
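To show why sample size matters, here is a minimal sketch of a two-proportion z-test using only the standard library. It relies on a normal approximation, which is itself rough for very small samples, and the conversion counts are invented. The same relative lift is tested at two sample sizes.

```python
import math


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a difference in conversion rates (normal approximation)."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / std_err
    # Two-sided tail probability of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical test: 10% vs 20% conversion with 40 visitors per variant.
small_p = two_proportion_p_value(4, 40, 8, 40)

# Same rates with 4,000 visitors per variant.
large_p = two_proportion_p_value(400, 4000, 800, 4000)

print(f"small sample p-value: {small_p:.3f}")
print(f"large sample p-value: {large_p:.3g}")
```

A doubling of the conversion rate looks dramatic in both cases, but only the larger sample gives strong evidence that the difference is not noise.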

Dashboard sprawl

The final trap is building too many dashboards because nobody wants to decide which few matter. That creates a scavenger hunt, not clarity.

A cleaner approach:

Keep one operating scorecard with the small set of metrics your team uses weekly

Archive dead dashboards instead of pretending they’re still relevant

Default to questions first when someone asks for a new chart

Require an owner for any core metric

If nobody owns the number, nobody will notice when it breaks.

Your Action Plan From Data to Decision

You don’t need a crash course in SQL. You need a simpler operating habit. Ask better questions, connect the right sources, and shorten the time between confusion and action.

TL;DR

Start with the core bottleneck. If your team waits days or weeks for basic answers, the problem isn’t curiosity. It’s access.

Treat startup data analysis as question-driven. Good analysis starts with prompts like “why did retention drop?” or “which channels bring users who activate?” Not “which dashboard should we build?”

Match metrics to your stage. Early teams need validation and engagement signals. Later teams need efficiency, retention depth, and clearer operating metrics.

Centralize only the data that matters now. Connect the systems that define acquisition, product usage, revenue, and support. Don’t boil the ocean.

Use repeatable workflows. Growth, retention, and unit economics cover most of the decisions startups make every week.

Watch for self-inflicted mistakes. Vanity metrics, definition drift, analysis paralysis, and dashboard clutter waste more time than missing software.

Use conversational analytics where it removes friction. Skip the SQL. Just ask your data a question and get a chart in seconds.

Start smaller than you think. One source. One question. One useful chart. That’s enough to change how a team works.

Try asking your data right now: “Show me my revenue by month for the last year as a bar chart.”

Then ask a second question based on what you see. That’s the whole game. Faster learning beats prettier reporting.

Connect your first data source in Statspresso and ask your first question in plain English. If your startup already has the data but not the time to wrangle SQL, that’s the fastest path from scattered numbers to a chart your team can use.